Introduction

The parameters that define the model architecture are known as hyperparameters, and the process of searching for the best model architecture is known as hyperparameter tuning. When you create a machine learning model, you will be given design options for defining your model architecture. To find the best model architecture for the given problem, you must know how to tune your hyperparameters.

In this article, we will discuss what hyperparameter tuning is, why hyperparameters matter in machine learning models, how to find the best hyperparameters, the main optimization techniques, and the difference between parameters and hyperparameters. Let’s get started!


What is Hyperparameter tuning?

Most of a model’s parameters are learned directly from the data during training. However, there are some parameters, known as hyperparameters, that cannot be learned directly from the regular training process. They are usually fixed before training starts, and they express important model properties such as the model’s complexity or how quickly it should learn. The process of selecting a set of optimal hyperparameters for a learning algorithm is known as hyperparameter tuning, and it is a key step in getting good results from machine learning algorithms.

Read more about the Hyperparameter definition here: Hyperparameter definition

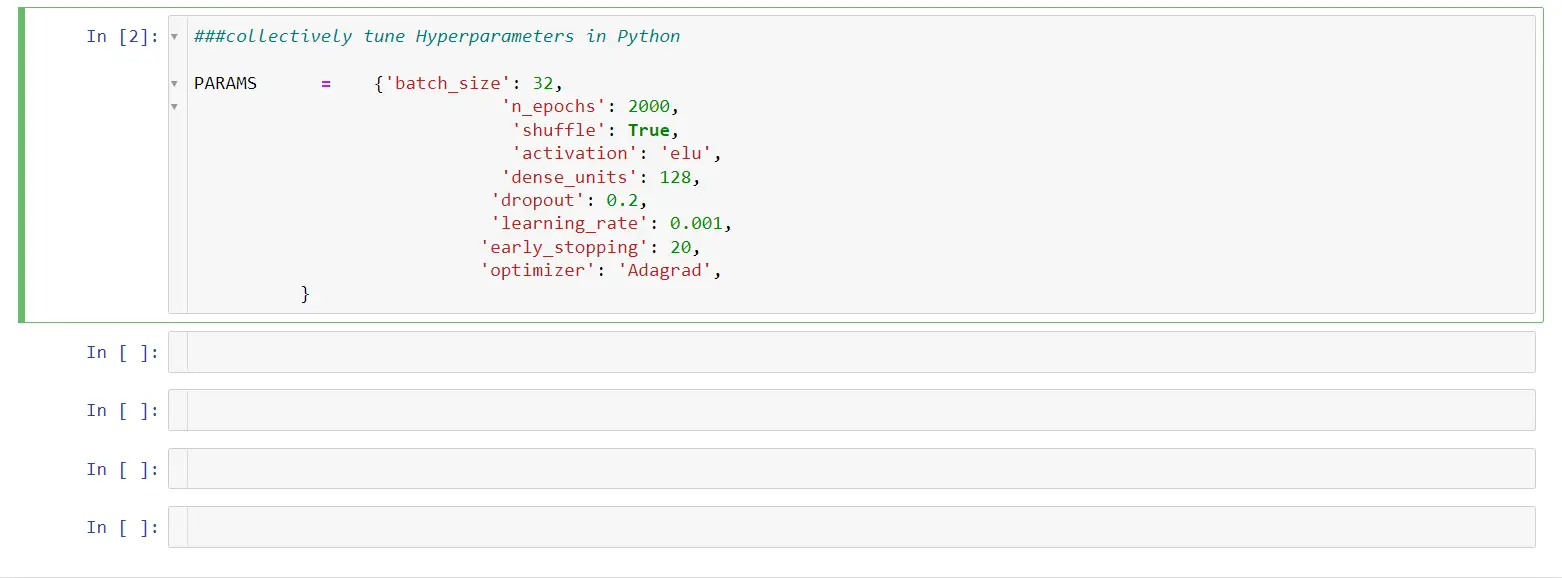

The sections below show how hyperparameters can be tuned together in Python.

Why is Tuning Hyperparameters Important in Machine Learning Models?

Hyperparameter tuning is the process of determining the best combination of hyperparameters to maximize model performance. It works by running several trials within a single tuning job. Each trial is a complete execution of your training application with the hyperparameter values you specify. When the process finishes, it gives you the set of hyperparameter values, among those tried, that performed best. It is an important step in any machine learning project because the same algorithm can perform very differently depending on these values.
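
As a rough illustration of the trial idea, the sketch below runs one complete cross-validated training per candidate value and keeps the best one. The dataset (scikit-learn's bundled iris data), the model (`KNeighborsClassifier`), and the candidate values are assumptions chosen for illustration; they do not come from the article.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import cross_val_score
from sklearn.neighbors import KNeighborsClassifier

# Small bundled dataset, assumed here only to keep the sketch self-contained.
X, y = load_iris(return_X_y=True)

# Each candidate value of n_neighbors is one "trial" (illustrative values).
candidate_values = [1, 3, 5, 7, 9]

trial_scores = {}
for k in candidate_values:
    model = KNeighborsClassifier(n_neighbors=k)
    # A trial is a full training run, scored with 5-fold cross-validation.
    trial_scores[k] = cross_val_score(model, X, y, cv=5).mean()

# The tuning result is the value whose trial scored highest.
best_k = max(trial_scores, key=trial_scores.get)
print("best n_neighbors:", best_k)
print("mean accuracy:", round(trial_scores[best_k], 3))
```

The methods described later in the article automate this same loop and differ mainly in how they choose which values to try.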

How to find the best Hyperparameters?

There are two ways to find the best hyperparameters. Which one suits you depends on how well you understand the problem and the business use case you are solving for.

The two approaches are:

- Manual Hyperparameter Tuning

- Automated Hyperparameter Tuning

Manual Hyperparameter Tuning

Manual tuning means experimenting with different sets of hyperparameter values by hand and comparing the results.

Instead of a grid search or random search, you can also write your own search procedure in Python, for example a stochastic optimization algorithm. This takes more time, but it can be worth trying when you already have a good idea of which values to search around.

This approach can be applied to any model, for example the Perceptron algorithm or the XGBoost gradient boosting algorithm. You can find code and further details on all three topics, i.e.,

- Stochastic optimization algorithms

- Perceptron algorithm

- XGBoost gradient boosting algorithm

in this article by Machine Learning Mastery: Optimize Hyperparameters manually
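
To make the idea of a hand-written stochastic search concrete, here is a minimal hill-climbing sketch that tunes two Perceptron hyperparameters (`eta0` and `alpha`). It is an illustration under stated assumptions, not the code from the linked article: the synthetic dataset, the search range, the step size, and the helper names `evaluate` and `hill_climb` are all hypothetical.

```python
import random

from sklearn.datasets import make_classification
from sklearn.linear_model import Perceptron
from sklearn.model_selection import cross_val_score

# Synthetic data, used only so the sketch is self-contained.
X, y = make_classification(n_samples=300, n_features=5, n_informative=3,
                           n_redundant=1, random_state=1)


def evaluate(eta0, alpha):
    """Mean 3-fold cross-validated accuracy for one hyperparameter setting."""
    model = Perceptron(eta0=eta0, alpha=alpha, penalty="l2", random_state=1)
    return cross_val_score(model, X, y, cv=3).mean()


def hill_climb(n_iter=30, step=0.1, seed=1):
    """Stochastic hill climbing over (eta0, alpha) in an assumed range."""
    rng = random.Random(seed)
    # Start from a random point in the assumed search range [1e-4, 1.0].
    current = (rng.uniform(1e-4, 1.0), rng.uniform(1e-4, 1.0))
    current_score = evaluate(*current)
    for _ in range(n_iter):
        # Propose a small random perturbation, clipped to the search range.
        candidate = tuple(min(1.0, max(1e-4, v + rng.gauss(0.0, step)))
                          for v in current)
        candidate_score = evaluate(*candidate)
        # Keep the candidate only if it is at least as good as the current point.
        if candidate_score >= current_score:
            current, current_score = candidate, candidate_score
    return current, current_score


best_params, best_score = hill_climb()
print("eta0=%.4f alpha=%.4f accuracy=%.3f" % (*best_params, best_score))
```

Accepting candidates that score equally well lets the search drift across flat regions instead of getting stuck at the first plateau.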

Hyperparameter Optimization Methods

There are multiple ways of tuning hyperparameters. Six of the most widely used methods are described below, each with a pointer to further resources.

- Grid Search

GridSearchCV from scikit-learn automates the search for the best hyperparameters without you having to write the loops yourself. You pass it a model and a grid of candidate values, and it evaluates every combination with cross-validation. After fitting, it records the best parameters, the best score, and more.

Find more about grid search cv here: GridSearchCV
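
A minimal sketch of a grid search follows. The dataset (iris), the model (`SVC`), and the grid values are assumptions picked for illustration.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import GridSearchCV
from sklearn.svm import SVC

X, y = load_iris(return_X_y=True)

# Illustrative grid: 3 values of C x 2 kernels = 6 candidate combinations.
param_grid = {"C": [0.1, 1, 10], "kernel": ["linear", "rbf"]}

# Every combination is evaluated with 5-fold cross-validation.
grid_search = GridSearchCV(SVC(), param_grid, cv=5)
grid_search.fit(X, y)

print("best parameters:", grid_search.best_params_)
print("best CV score:", round(grid_search.best_score_, 3))
```

Because every combination is tried, the cost grows multiplicatively with each hyperparameter you add to the grid.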

- Randomized Search

The name says it all. RandomizedSearchCV from scikit-learn samples a fixed number of hyperparameter combinations at random from the ranges or distributions you specify, so the number of models trained is usually smaller than in a grid search.

Find more about the Randomized search CV here: RandomizedSearchCV
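
The sketch below shows a randomized search under assumed settings: the iris dataset, an RBF-kernel `SVC`, log-uniform distributions for `C` and `gamma`, and a budget of 10 sampled combinations are all illustrative choices.

```python
from scipy.stats import loguniform
from sklearn.datasets import load_iris
from sklearn.model_selection import RandomizedSearchCV
from sklearn.svm import SVC

X, y = load_iris(return_X_y=True)

# Distributions to sample from, instead of a fixed grid (illustrative ranges).
param_distributions = {
    "C": loguniform(1e-2, 1e2),
    "gamma": loguniform(1e-3, 1e1),
}

# n_iter fixes how many combinations are sampled and evaluated.
random_search = RandomizedSearchCV(
    SVC(kernel="rbf"),
    param_distributions,
    n_iter=10,
    cv=5,
    random_state=0,
)
random_search.fit(X, y)

print("best parameters:", random_search.best_params_)
print("best CV score:", round(random_search.best_score_, 3))
```

The budget is set by `n_iter` rather than by the size of the search space, which is why the cost stays fixed even when more hyperparameters are added.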

- Halving Grid Search

Computationally cheaper than a plain grid search, the halving grid search first evaluates all candidate combinations on a small sample of the data, keeps only the best-performing candidates, and re-evaluates them on progressively larger samples.

Find more about the halving grid search here: HalvingGridSearchCV
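
Here is a small sketch of a halving grid search. The synthetic dataset, the random forest model, the grid, and `factor=3` are assumptions for illustration. Note that scikit-learn requires an explicit import from `sklearn.experimental` to enable the halving estimators.

```python
# This import enables the halving search estimators in scikit-learn.
from sklearn.experimental import enable_halving_search_cv  # noqa: F401
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import HalvingGridSearchCV

# Synthetic data, used only so the sketch is self-contained.
X, y = make_classification(n_samples=600, n_features=10, random_state=0)

# Illustrative grid: 3 x 3 = 9 candidate combinations.
param_grid = {"max_depth": [2, 4, None], "min_samples_split": [2, 5, 10]}

# factor=3 keeps roughly the best third of candidates each round
# and gives the survivors about three times as many samples.
halving_search = HalvingGridSearchCV(
    RandomForestClassifier(n_estimators=20, random_state=0),
    param_grid,
    factor=3,
    cv=3,
    random_state=0,
)
halving_search.fit(X, y)

print("candidates per round:", halving_search.n_candidates_)
print("samples per round:", halving_search.n_resources_)
print("best parameters:", halving_search.best_params_)
```

The two printed lists make the halving visible: the candidate count shrinks from round to round while the sample count grows.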

- HyperOpt-Sklearn

Most of the hyperparameter tuning methods here come from the scikit-learn library, but another excellent library for the same task is Hyperopt. Hyperopt is a Bayesian optimization library designed for large-scale optimization of models with hundreds of parameters, and its search can be scaled across multiple CPU cores. Hyperopt-Sklearn builds on it to tune scikit-learn models.

Find more about the Hyperopt-Sklearn package here: Hyperopt-Sklearn

- Bayes Search

Bayesian optimization techniques aim to arrive at good hyperparameter values in as few evaluations as possible. Rather than trying candidates blindly, the Bayes search approach uses the results of past evaluations to decide which candidates to try next.

Find more about it here: Bayes Search

- Halving Randomized Search

Like HalvingGridSearchCV, this method uses successive halving, but instead of starting from every combination in a grid, it starts from randomly sampled combinations to arrive at the best hyperparameters.

Find more about Halving randomized search here: Halving Randomized Search
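
A comparable sketch for the halving randomized search is shown below. The synthetic dataset, the model, the integer distributions, and the values of `n_candidates`, `min_resources`, and `factor` are illustrative assumptions.

```python
from scipy.stats import randint
# This import enables the halving search estimators in scikit-learn.
from sklearn.experimental import enable_halving_search_cv  # noqa: F401
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import HalvingRandomSearchCV

# Synthetic data, used only so the sketch is self-contained.
X, y = make_classification(n_samples=600, n_features=10, random_state=0)

# Candidates are sampled from these distributions rather than listed in a grid.
param_distributions = {
    "max_depth": randint(2, 10),          # integers 2..9
    "min_samples_split": randint(2, 11),  # integers 2..10
}

halving_random = HalvingRandomSearchCV(
    RandomForestClassifier(n_estimators=20, random_state=0),
    param_distributions,
    n_candidates=9,    # number of random combinations in the first round
    min_resources=60,  # samples used in the first round
    factor=3,
    cv=3,
    random_state=0,
)
halving_random.fit(X, y)

print("candidates per round:", halving_random.n_candidates_)
print("samples per round:", halving_random.n_resources_)
print("best parameters:", halving_random.best_params_)
```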

Parameters vs Hyperparameters in Machine Learning

To begin, let us distinguish between a hyperparameter and a parameter in machine learning.

Hyperparameters cannot be estimated from the data provided; they are set before training. Parameters, on the other hand, are estimated from the data during training.

In deep learning, for example, the weights of a neural network are parameters, while the learning rate used to train it is a hyperparameter.

| Hyperparameters | Parameters |
|---|---|
| Control how the model parameters are estimated | Used by the model to make predictions |
| Chosen by hyperparameter tuning | Estimated by optimization algorithms such as Adam, Adagrad, etc. |
| Set manually (or by a tuning procedure) before training | Learned automatically during training |
| Determine how efficient training is | Found by training, and determine how the model performs |
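
The distinction can be seen directly in code. In this sketch, which assumes the iris dataset and a `LogisticRegression` model with illustrative settings, the hyperparameters are passed in before training, while the parameters only exist after `fit` has run.

```python
from sklearn.datasets import load_iris
from sklearn.linear_model import LogisticRegression

X, y = load_iris(return_X_y=True)

# Hyperparameters: chosen by us before training (values are illustrative).
model = LogisticRegression(C=0.5, max_iter=500)
print("hyperparameter C:", model.get_params()["C"])

# Parameters: do not exist until the model has been fitted to data.
has_coef_before_fit = hasattr(model, "coef_")
print("has coef_ before fit:", has_coef_before_fit)

model.fit(X, y)

# After training, the learned parameters are available as attributes.
print("learned coefficients shape:", model.coef_.shape)
print("learned intercepts shape:", model.intercept_.shape)
```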

Conclusion

Hyperparameter tuning is one of the most effective ways to improve a machine learning model, and it is widely used even in the toughest machine learning competitions. These methods are very useful, but sometimes the most useful model is not the one with the best score. Don’t overfit your training dataset in a bid to squeeze out a higher score; a model tuned too closely to the data it was trained on may perform worse on new data.

For more such content, check out our website -> Buggy Programmer