Clustering methods such as K-Means and hierarchical clustering are a broad set of techniques for finding subgroups, or clusters, in a dataset. When observations are clustered, they are partitioned into distinct groups so that the observations within each group are quite similar to one another, while observations in different groups are quite different from one another. For this to work, what it means for two or more observations to be similar or different must be clearly defined.

This is often a domain-specific consideration that must be made on the basis of knowledge of the data being studied. In this article, we’ll examine these two clustering methods and see which one is the better choice in a given situation.

Also, read -> What are the Main Differences between K Means and KNN?

K-means vs hierarchical clustering: Comparative analysis

K-Means Clustering

K-Means clustering is a simple and straightforward approach for partitioning a dataset into ‘K’ distinct, non-overlapping clusters.

To perform K-Means clustering, one must first specify the desired number of clusters, ‘K’; the algorithm then assigns each observation to exactly one of the ‘K’ clusters. The results come from a simple and intuitive mathematical problem. In words, we want to partition the observations into K clusters such that the total within-cluster variation, summed over all K clusters, is as small as possible, so that the elements within each cluster are as closely knit as possible. In standard K-Means, the within-cluster variation is measured with squared Euclidean distance. Other measures, such as the Manhattan distance or the Jaccard distance, generally call for related variants of the algorithm (for example K-medians or K-medoids) rather than K-Means itself.
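
As a rough illustration of the quantity being minimized, the sketch below computes the total within-cluster variation by hand for two different partitions of a small toy dataset. The helper name `within_cluster_variation` and both labelings are assumptions made for this example, not part of any library.

```python
import numpy as np

def within_cluster_variation(X, labels):
    """Sum of squared Euclidean distances from each point to its cluster mean."""
    X = np.asarray(X, dtype=float)
    labels = np.asarray(labels)
    total = 0.0
    for k in np.unique(labels):
        members = X[labels == k]          # observations assigned to cluster k
        centroid = members.mean(axis=0)   # the cluster mean
        total += ((members - centroid) ** 2).sum()
    return total

# Toy data: two tight vertical columns of points, at x = 1 and x = 10.
X = np.array([[1, 2], [1, 4], [1, 0], [10, 2], [10, 4], [10, 0]])

good = within_cluster_variation(X, [1, 1, 1, 0, 0, 0])  # one cluster per column
bad = within_cluster_variation(X, [0, 1, 0, 1, 0, 1])   # columns mixed together

print(good, bad)  # 16.0 124.0
```

The partition that keeps each column together has a much smaller total, which is the kind of partition K-Means searches for.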

Read more about the K-Means Clustering approach here: K-Means Clustering

Hierarchical Clustering

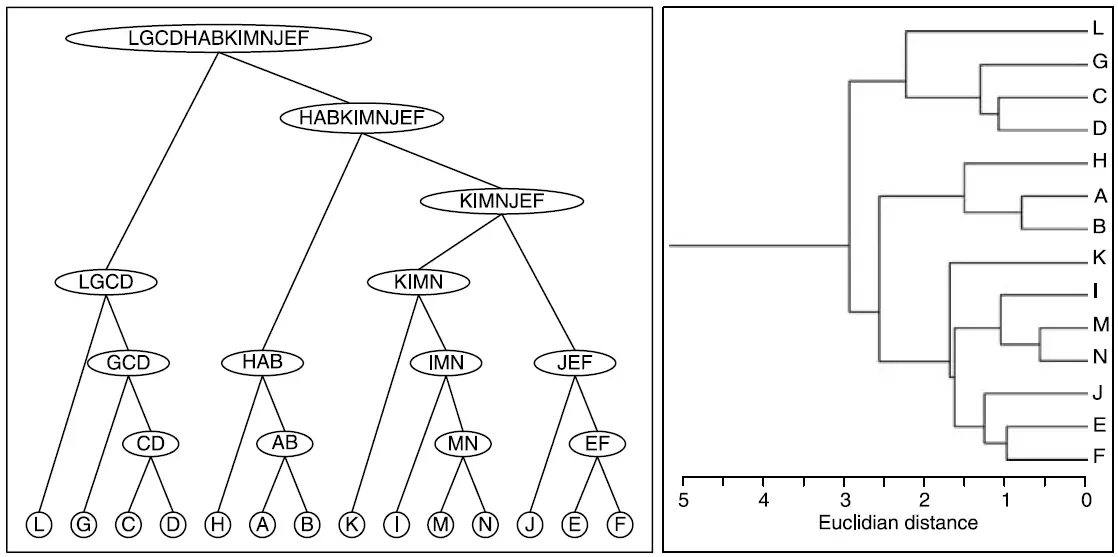

The K-Means algorithm has one clear disadvantage: the number of clusters, ‘K’, must be decided beforehand, and a poor choice results in the wrong number of clusters being formed. This is where the difference between K-Means and hierarchical clustering comes into the picture. Hierarchical clustering is an alternative approach that does not require committing to a particular number of clusters up front. It also has the added advantage of producing a tree-based diagram of the observations, called a dendrogram.

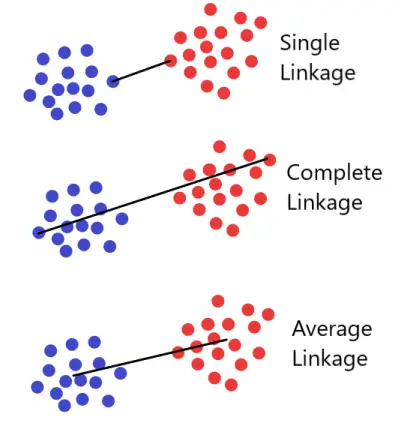

Hierarchical clustering with a dendrogram is obtained via an extremely simple algorithm. We begin by defining some dissimilarity measure between each pair of observations; most often, Euclidean distance is used. The algorithm then proceeds iteratively, and because it must also compare clusters that contain several observations, it needs a linkage rule that extends the pairwise dissimilarity to groups. The linkages commonly used are single linkage (the smallest pairwise dissimilarity between the two clusters), complete linkage (the largest), and average linkage (the mean of all pairwise dissimilarities).
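
The three linkages can be sketched in a few lines of NumPy. The function name `linkage_distance` and the two small clusters are hypothetical, chosen only to show how the same pair of clusters gets a different dissimilarity under each rule.

```python
import numpy as np

def linkage_distance(A, B, method="single"):
    """Dissimilarity between clusters A and B under a given linkage."""
    A = np.asarray(A, dtype=float)
    B = np.asarray(B, dtype=float)
    # Euclidean distance between every point in A and every point in B.
    d = np.linalg.norm(A[:, None, :] - B[None, :, :], axis=2)
    if method == "single":
        return d.min()    # closest pair
    if method == "complete":
        return d.max()    # farthest pair
    if method == "average":
        return d.mean()   # mean over all pairs
    raise ValueError(f"unknown linkage: {method}")

A = [[0, 0], [0, 1]]
B = [[3, 0], [4, 0]]

single = linkage_distance(A, B, "single")
complete = linkage_distance(A, B, "complete")
average = linkage_distance(A, B, "average")
print(single, complete, average)
```

Single linkage is never larger than average linkage, which in turn is never larger than complete linkage, so the choice can change which clusters look "closest" at each step.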

Read more about Dendrograms here: Understanding Hierarchies using Dendrograms

Starting out at the bottom of the dendrogram, each of the n observations is treated as its own cluster. The two clusters that are most similar to each other are then fused, so that there are now n − 1 clusters. Next, the two clusters that are most similar to each other are fused again, so that there are now n − 2 clusters. The algorithm proceeds in this fashion until all of the observations belong to one single cluster, and the dendrogram is complete.
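
The procedure described above can be sketched as a deliberately naive loop. The function `agglomerate` is a hypothetical helper written for this illustration, and it assumes single linkage with Euclidean distance; real libraries use far more efficient implementations.

```python
import numpy as np

def agglomerate(X):
    """Naive single-linkage agglomeration; returns the merge history."""
    X = np.asarray(X, dtype=float)
    clusters = [[i] for i in range(len(X))]  # every observation starts alone
    history = []
    while len(clusters) > 1:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                # Single linkage: smallest distance between any two members.
                d = min(np.linalg.norm(X[i] - X[j])
                        for i in clusters[a] for j in clusters[b])
                if best is None or d < best[0]:
                    best = (d, a, b)
        d, a, b = best
        merged = clusters[a] + clusters[b]
        # Replace the two closest clusters with their union.
        clusters = [c for k, c in enumerate(clusters) if k not in (a, b)]
        clusters.append(merged)
        history.append((len(clusters), float(d)))
    return history

X = [[1, 2], [1, 4], [1, 0], [4, 2], [4, 4], [4, 0]]
history = agglomerate(X)
for n_clusters, height in history:
    print(n_clusters, height)
```

Each pass removes exactly one cluster, so six observations need five merges, and the recorded merge distances are the heights at which branches join in the dendrogram.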

How to do clustering using K-Means and hierarchical clustering in Python?

To cluster data with either K-Means or hierarchical clustering, one can use the scikit-learn library in Python, which makes it fairly easy to fit a clustering algorithm to the data and inspect the resulting clusters.

K-Means Clustering in Python

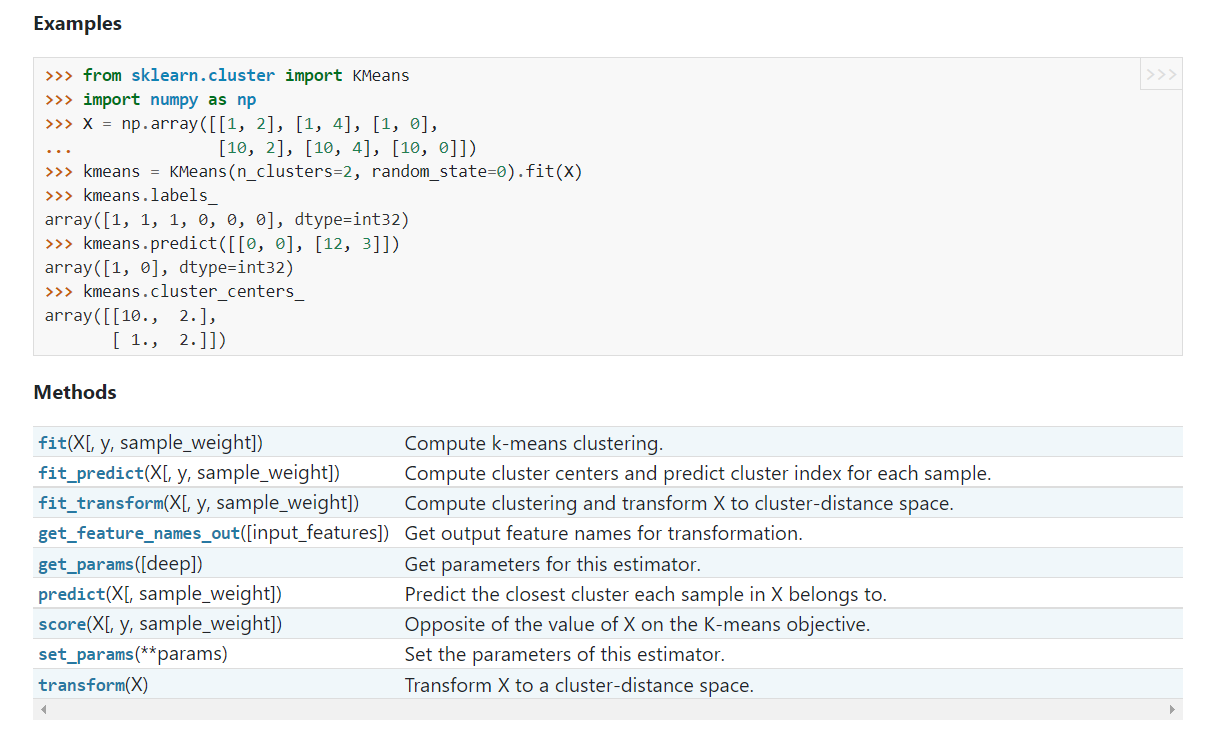

The scikit-learn library can be used as shown in the picture above to run K-Means clustering. Find the copyable code below.

Or visit the website: K-Means Clustering Sklearn

>>> from sklearn.cluster import KMeans
>>> import numpy as np
>>> X = np.array([[1, 2], [1, 4], [1, 0],
...               [10, 2], [10, 4], [10, 0]])
>>> kmeans = KMeans(n_clusters=2, random_state=0).fit(X)
>>> kmeans.labels_
array([1, 1, 1, 0, 0, 0], dtype=int32)
>>> kmeans.predict([[0, 0], [12, 3]])
array([1, 0], dtype=int32)
>>> kmeans.cluster_centers_
array([[10.,  2.],
       [ 1.,  2.]])

Hierarchical Clustering in Python

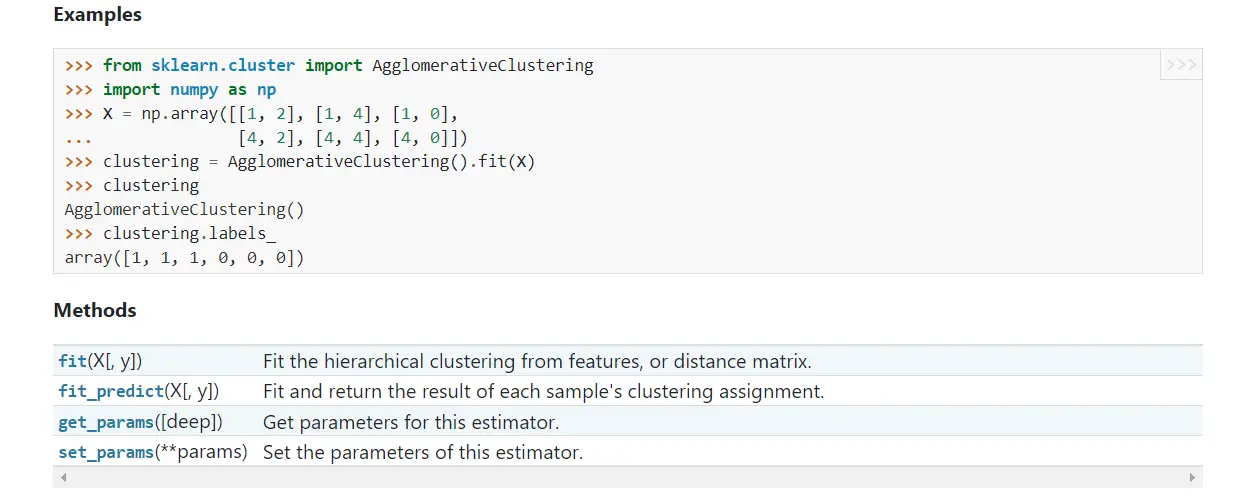

The scikit-learn library can also be used, as shown in the picture above, to run hierarchical (agglomerative) clustering. As one can observe, the code for hierarchical clustering is shorter than the K-Means code above, in part because it relies on the default settings. Find the copyable code below.

Or visit the website: Agglomerative hierarchical clustering

>>> from sklearn.cluster import AgglomerativeClustering
>>> import numpy as np
>>> X = np.array([[1, 2], [1, 4], [1, 0],
...               [4, 2], [4, 4], [4, 0]])
>>> clustering = AgglomerativeClustering().fit(X)
>>> clustering
AgglomerativeClustering()
>>> clustering.labels_
array([1, 1, 1, 0, 0, 0])

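The call above relies on scikit-learn's defaults, which are two clusters and Ward linkage. As a small sketch, the number of clusters and the linkage can also be set explicitly through the `n_clusters` and `linkage` parameters. The data below is the well-separated toy data from the K-Means example, reused here as an assumption so that all four linkages agree; on less cleanly separated data they can give different groupings.

```python
import numpy as np
from sklearn.cluster import AgglomerativeClustering

# Two well-separated columns of points (same toy data as the K-Means example).
X = np.array([[1, 2], [1, 4], [1, 0], [10, 2], [10, 4], [10, 0]])

results = {}
for link in ("ward", "complete", "average", "single"):
    model = AgglomerativeClustering(n_clusters=2, linkage=link).fit(X)
    results[link] = model.labels_
    print(link, model.labels_)
```

Unlike `KMeans`, `AgglomerativeClustering` has no `predict` method, so it labels only the observations it was fitted on.
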
Problems with the K-means and Hierarchical Clustering algorithms:

With both K-means and hierarchical clustering, small decisions carry big consequences. Some of the practical issues one might face are as follows:

- Should the variables be normalized or standardized first?

- With Hierarchical Clustering

– What measure of dissimilarity should be used?

– What type of linkage (average, complete, single) should be used?

– Where should the dendrogram be cut to obtain the right number of clusters?

- With K-means, how many clusters should be used?
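
Two of these decisions can be explored in code. The sketch below standardizes features that live on very different scales and then compares several values of K using the silhouette score. The synthetic data is generated purely for illustration, and the silhouette score is only one common heuristic for choosing K; it is an assumption of this example rather than a method prescribed by this article.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import silhouette_score
from sklearn.preprocessing import StandardScaler

# Synthetic data (an assumption): three blobs whose second feature is on a
# scale roughly a thousand times larger than the first.
rng = np.random.default_rng(0)
centers = np.array([[0.0, 0.0], [5.0, 5000.0], [10.0, 0.0]])
X = np.vstack([c + rng.normal(scale=[0.3, 300.0], size=(50, 2)) for c in centers])

# Standardize so that each feature has zero mean and unit variance;
# otherwise the large-scale feature dominates the Euclidean distance.
X_scaled = StandardScaler().fit_transform(X)

# Fit K-Means for several K and score each clustering.
scores = {}
for k in range(2, 7):
    labels = KMeans(n_clusters=k, n_init=10, random_state=0).fit_predict(X_scaled)
    scores[k] = silhouette_score(X_scaled, labels)

best_k = max(scores, key=scores.get)
print(best_k, scores)
```

A higher silhouette score suggests tighter, better-separated clusters, but the result should still be checked against what is known about the data.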

Conclusion

Both K-means and hierarchical clustering have their own merits and demerits. K-means can go wrong if the number of clusters is picked arbitrarily, but if your domain knowledge tells you how many clusters the dataset contains, that is often enough for it to produce sensible clusters. With hierarchical clustering, it is hard to determine the right number of clusters without a dendrogram; drawing one takes a while, and for a really large dataset the dendrogram itself can become confusing.

All things considered, in the unsupervised learning segments of Machine Learning algorithms, your domain knowledge can make or break your entire analysis, so ensure you do your research on your project before you dive into coding the algorithm.

Try out both the algorithms and let us know in the comments below what you think about K-means vs Hierarchical Clustering.

For more such content, check out our website -> Buggy Programmer.

An eternal learner, I believe Data is the panacea to the world's problems. I enjoy Data Science and all things related to data. Let's unravel this mystery about what Data Science really is, together. With over 33 certifications, I enjoy writing about Data Science to make it simpler for everyone to understand. Happy reading and do connect with me on my LinkedIn to know more!

- Yash Gupta
