Hierarchical Clustering is one of the most widely used unsupervised learning algorithms, so it is important to know its types. Clustering algorithms like this one are routinely used in companies to segment data, for example to group customers into segments, which happens to be one of the most common tasks in practice. They are equally useful in any other situation where your data has categories and can be put into different clusters.

In this article, we’ll go over the two types of hierarchical clustering and the situations each one suits, so you can decide which one to use in your work or projects!

Note: If you know the number of clusters in your data and would like to pre-specify it, try the K-Means Clustering algorithm, where you specify ‘K’, the number of clusters, beforehand.

What is Hierarchical Clustering?

K-Means requires the number of clusters to be chosen in advance, so there is a possibility that the wrong number of clusters is formed, and this created the need for a more flexible approach to forming clusters. This is where hierarchical clustering comes into the picture. Hierarchical clustering is an alternative approach that does not need the number of clusters up front: it builds a tree-based diagram called a dendrogram, using different linkage criteria to group clusters together, and the dendrogram can then be cut at whichever level gives a sensible number of clusters.

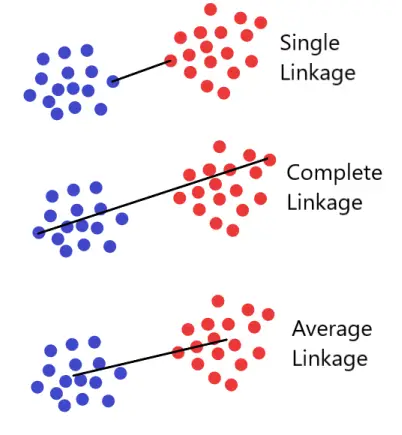

Hierarchical clustering begins by defining a dissimilarity measure between each pair of observations. Its two types, top-down and bottom-up, differ in whether clusters are formed by successive division or by successive merging. Euclidean distance is the most common dissimilarity measure, regardless of which type of hierarchical clustering is used. The algorithm then proceeds iteratively. The linkages that can be used in a hierarchical clustering algorithm include Single Linkage, Average Linkage, and Complete Linkage.
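To make that first step concrete, here is a minimal Python sketch (standard library only) that computes the dissimilarity between every pair of observations. It assumes observations are tuples of numbers and uses Euclidean distance via `math.dist`; the function name `dissimilarity_matrix` is illustrative, not taken from any library.

```python
import math


def dissimilarity_matrix(points, metric=math.dist):
    """Return the symmetric n x n matrix of pairwise dissimilarities.

    The diagonal is zero because every observation is at distance 0 from itself.
    """
    n = len(points)
    d = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            # Dissimilarity is symmetric, so each pair is computed only once.
            d[i][j] = d[j][i] = metric(points[i], points[j])
    return d


points = [(0.0, 0.0), (3.0, 4.0), (6.0, 8.0)]
for row in dissimilarity_matrix(points):
    print(row)
```

Passing a different `metric` function swaps in another dissimilarity measure without changing the rest of the procedure.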

Types of Hierarchical Clustering

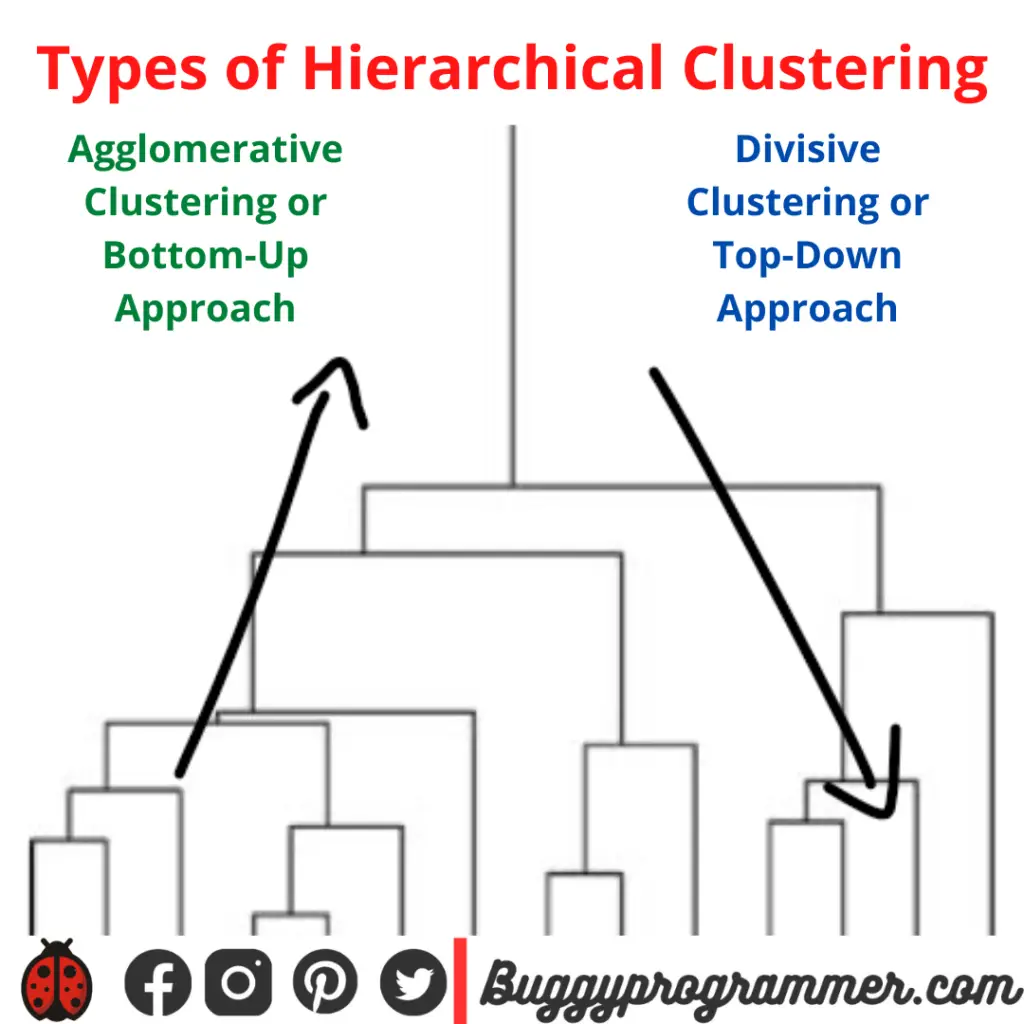

There are two types of hierarchical clustering algorithms:

- Agglomerative Hierarchical Clustering or Bottom Up Clustering

- Divisive Clustering or Top Down Clustering

Agglomerative Hierarchical Clustering:

Agglomerative, or the bottom-up approach, is where each observation starts in its own cluster and pairs of clusters are merged as one moves up the hierarchy. To determine which clusters should be combined, a measure of dissimilarity between sets of observations has to be chosen.

Once the dissimilarity metric is chosen, a linkage criterion (discussed further in this article) turns the pairwise distances between observations into distances between clusters, and at each step the two clusters with the smallest linkage distance are merged. In this way, the data is grouped according to the distance metric and the linkage criterion, whether the clusters are built in the agglomerative or the divisive way.
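The bottom-up procedure can be sketched in a few lines of Python. This is a naive illustration rather than an efficient implementation: it assumes Euclidean distance and single linkage (the smallest distance between members of two clusters), and the names `single_linkage` and `agglomerative` are hypothetical. At each step it merges the pair of clusters with the smallest linkage distance and records the merge; this merge history is what a dendrogram plots.

```python
import math
from itertools import combinations


def single_linkage(a, b, points):
    """Smallest Euclidean distance between any member of cluster a and any member of cluster b."""
    return min(math.dist(points[i], points[j]) for i in a for j in b)


def agglomerative(points, linkage=single_linkage):
    """Naive bottom-up clustering over point indices.

    Starts with every observation in its own cluster and repeatedly merges the
    two clusters with the smallest linkage distance until one cluster remains.
    Returns the merge history as (cluster_a, cluster_b, distance) tuples.
    """
    clusters = [frozenset([i]) for i in range(len(points))]
    history = []
    while len(clusters) > 1:
        # Compare every pair of current clusters and pick the closest pair.
        a, b = min(combinations(clusters, 2),
                   key=lambda pair: linkage(pair[0], pair[1], points))
        history.append((a, b, linkage(a, b, points)))
        # Replace the two merged clusters with their union.
        clusters = [c for c in clusters if c != a and c != b] + [a | b]
    return history


points = [(0, 0), (0, 1), (5, 5), (5, 6), (20, 20)]
for a, b, dist in agglomerative(points):
    print(sorted(a), sorted(b), round(dist, 2))
```

Because every pair of clusters is re-evaluated at every step, this sketch is much slower than library routines such as SciPy's `scipy.cluster.hierarchy.linkage`; it is only meant to show the merge logic.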

The metrics that can be used to calculate the distances between the elements of the clusters include:

- Euclidean Distance

- Squared Euclidean Distance

- Manhattan Distance

- Chebyshev Distance

- Mahalanobis Distance
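Each of these metrics fits in a line or two of Python. The sketch below assumes points are equal-length sequences of numbers. For Mahalanobis distance it assumes the caller already has the inverse covariance matrix of the data (`inv_cov`), since estimating it is outside the scope of this example; with the identity matrix, Mahalanobis distance reduces to Euclidean distance.

```python
import math


def squared_euclidean(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))


def euclidean(p, q):
    return math.sqrt(squared_euclidean(p, q))


def manhattan(p, q):
    # Sum of absolute coordinate differences ("city block" distance).
    return sum(abs(a - b) for a, b in zip(p, q))


def chebyshev(p, q):
    # Largest absolute difference along any single coordinate.
    return max(abs(a - b) for a, b in zip(p, q))


def mahalanobis(p, q, inv_cov):
    """sqrt(d^T S^-1 d) with d = p - q; inv_cov is S^-1, supplied by the caller."""
    d = [a - b for a, b in zip(p, q)]
    n = len(d)
    return math.sqrt(sum(d[i] * inv_cov[i][j] * d[j]
                         for i in range(n) for j in range(n)))


p, q = (1, 2), (4, 6)
print(euclidean(p, q), squared_euclidean(p, q), manhattan(p, q), chebyshev(p, q))
print(mahalanobis(p, q, [[1, 0], [0, 1]]))  # identity matrix: same as Euclidean
```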

Divisive Clustering:

Divisive clustering is best known through DIANA, the Divisive Analysis clustering algorithm. All the data starts in the same cluster, and the largest cluster (in DIANA, the one with the largest diameter) is split repeatedly until every object is separated into its own singleton cluster. Because the clusters get smaller and smaller as the hierarchy is built from the top, this is called the divisive, or top-down, approach to clustering. In DIANA, each split starts from the observation with the maximum average dissimilarity to the rest of its cluster, measured with any of the metrics mentioned above.
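The splitting rule can be sketched as follows. This is a simplified Python illustration of DIANA, assuming Euclidean distance; the function names are hypothetical and it is not a reference implementation. To split a cluster, it seeds a "splinter group" with the observation that has the highest average dissimilarity to the others, then keeps moving over any observation that is, on average, closer to the splinter group than to the rest of its cluster. At each step the cluster chosen for splitting is the one with the largest diameter (largest pairwise distance).

```python
import math


def avg_dist(i, group, points):
    """Average distance from point i to the other members of group."""
    others = [j for j in group if j != i]
    return sum(math.dist(points[i], points[j]) for j in others) / len(others)


def diameter(cluster, points):
    """Largest pairwise distance within a cluster."""
    return max(math.dist(points[i], points[j]) for i in cluster for j in cluster)


def diana_split(cluster, points):
    """Split one cluster (a list of at least two point indices) into two."""
    remaining = list(cluster)
    # Seed the splinter group with the point that is, on average, most dissimilar.
    seed = max(remaining, key=lambda i: avg_dist(i, remaining, points))
    remaining.remove(seed)
    splinter = [seed]
    while len(remaining) > 1:
        # A positive gain means the point is closer, on average, to the splinter group.
        gains = {i: avg_dist(i, remaining, points) - avg_dist(i, splinter, points)
                 for i in remaining}
        best = max(gains, key=gains.get)
        if gains[best] <= 0:
            break
        remaining.remove(best)
        splinter.append(best)
    return splinter, remaining


def diana(points):
    """Split top-down until every point is a singleton; return the splits in order."""
    clusters = [list(range(len(points)))]
    splits = []
    while any(len(c) > 1 for c in clusters):
        # Split the non-singleton cluster with the largest diameter.
        target = max((c for c in clusters if len(c) > 1),
                     key=lambda c: diameter(c, points))
        clusters.remove(target)
        left, right = diana_split(target, points)
        clusters += [left, right]
        splits.append((sorted(left), sorted(right)))
    return splits


for left, right in diana([(0, 0), (1, 0), (10, 0), (11, 0), (12, 0)]):
    print(left, right)
```

The splits come out in order from the top of the hierarchy downwards, so the first one is the coarsest division of the data.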

How does clustering work in the two types?

Both types of hierarchical clustering rely on a linkage criterion, sometimes called a ‘Linkage System’. The linkage criterion determines the distance between two sets of observations as a function of the pairwise distances between observations. Commonly used linkage criteria between two sets of observations A and B are:

- Complete or Maximum Linkage Clustering

- Single or Minimum Linkage Clustering

- Unweighted Average or Simple Average Linkage Clustering

- Weighted Average Linkage Clustering

- Centroid Linkage Clustering

The most commonly used linkages are Complete, Single, and Average linkage.
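The criteria listed above can be written as small functions of two clusters A and B (lists of points). This is a minimal sketch assuming Euclidean distance between points. Weighted average linkage (WPGMA) is the exception: it is defined by an update rule applied when two clusters merge, averaging their distances to a third cluster with equal weight regardless of cluster size, so it is shown as an update function (`wpgma_update`, a hypothetical name) rather than as a function of A and B.

```python
import math


def _pairwise(A, B):
    """All |A| * |B| Euclidean distances between members of A and members of B."""
    return [math.dist(a, b) for a in A for b in B]


def single_linkage(A, B):
    # Minimum distance between the two clusters.
    return min(_pairwise(A, B))


def complete_linkage(A, B):
    # Maximum distance between the two clusters.
    return max(_pairwise(A, B))


def average_linkage(A, B):
    # Unweighted average (UPGMA): mean over all pairs.
    d = _pairwise(A, B)
    return sum(d) / len(d)


def centroid_linkage(A, B):
    # Distance between the centroids (coordinate-wise means) of A and B.
    ca = [sum(col) / len(A) for col in zip(*A)]
    cb = [sum(col) / len(B) for col in zip(*B)]
    return math.dist(ca, cb)


def wpgma_update(d_ik, d_jk):
    # Weighted average (WPGMA): after merging clusters i and j, the distance to
    # another cluster k is the plain mean of the two old distances, whatever
    # the cluster sizes.
    return (d_ik + d_jk) / 2


A = [(0, 0), (0, 2)]
B = [(3, 0), (3, 2)]
for f in (single_linkage, complete_linkage, average_linkage, centroid_linkage):
    print(f.__name__, round(f(A, B), 3))
```

Single linkage tends to chain clusters together through close neighbours, while complete linkage favours compact clusters; average linkage sits between the two.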

When to use which one of the types of Hierarchical Clustering?

- Divisive clustering is more complex than agglomerative clustering: it needs a flat clustering method as a subroutine to split each cluster, and building the complete hierarchy means splitting until every observation sits in its own singleton cluster.

- Of the two types, divisive clustering is more efficient than agglomerative clustering if you do not need the complete hierarchy all the way down to individual observations: the splitting can stop at whatever point satisfies the researcher.

- Divisive clustering can also be more accurate. Agglomerative clustering makes decisions based on local patterns or neighboring points without initially taking the global distribution of the data into account, and these early local decisions cannot be undone later. Divisive clustering, by contrast, takes the entire data set into consideration when making the high-level splits.
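The first two points can be illustrated with bisecting 2-means, one common way to implement top-down clustering (a different algorithm from DIANA). A flat clustering method, here 2-means, is used as the subroutine that splits clusters, and the loop stops as soon as the requested number of clusters `k` exists instead of descending to singletons. This is a sketch under stated assumptions: Euclidean distance, a deterministic farthest-point seeding, and splitting the cluster with the largest sum of squared errors (SSE) each time; the function names are hypothetical.

```python
import math


def mean(cluster):
    """Coordinate-wise mean of a list of points."""
    return tuple(sum(col) / len(cluster) for col in zip(*cluster))


def sse(cluster):
    """Sum of squared Euclidean distances from each point to the cluster mean."""
    m = mean(cluster)
    return sum(math.dist(p, m) ** 2 for p in cluster)


def two_means(cluster, iters=20):
    """Flat 2-means (Lloyd's algorithm), used as the splitting subroutine."""
    # Assumed deterministic seeding: the point farthest from the mean,
    # then the point farthest from that one.
    m = mean(cluster)
    c1 = max(cluster, key=lambda p: math.dist(p, m))
    c2 = max(cluster, key=lambda p: math.dist(p, c1))
    left, right = [], []
    for _ in range(iters):
        new_left = [p for p in cluster if math.dist(p, c1) <= math.dist(p, c2)]
        new_right = [p for p in cluster if math.dist(p, c1) > math.dist(p, c2)]
        if not new_left or not new_right or (new_left, new_right) == (left, right):
            break  # stable, or a side would be empty: keep the last valid split
        left, right = new_left, new_right
        c1, c2 = mean(left), mean(right)
    return left, right


def bisecting(points, k):
    """Top-down clustering that stops as soon as k clusters exist."""
    clusters = [list(points)]
    while len(clusters) < k:
        target = max(clusters, key=sse)  # split the least compact cluster
        left, right = two_means(target)
        if not left or not right:
            break  # nothing left to split (e.g. only identical points)
        clusters.remove(target)
        clusters += [left, right]
    return clusters


data = [(0, 0), (0, 1), (10, 10), (10, 11), (20, 0), (21, 0)]
for cluster in bisecting(data, k=3):
    print(cluster)
```

With `k=3` only two splits are performed, whereas building the full hierarchy would keep splitting until all six points were singletons.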

Conclusion

The two types of Hierarchical Clustering, Agglomerative and Divisive, are both very useful when data falls into categories or clusters but the number of clusters cannot be determined in advance. In that case, the data scientist needs a clear understanding of both in order to choose the right algorithm for the problem at hand.

The question is whether it helps more to start from the individual pieces of your data and merge them together (agglomerative clustering), or to take the entire data set and break it into smaller pieces until the result is satisfactory (divisive clustering). Once you know which suits your data better, you’ll have the right clusters to work with using either of the two types of Hierarchical Clustering.

For more such content, check out our website -> Buggy Programmer

An eternal learner, I believe Data is the panacea to the world's problems. I enjoy Data Science and all things related to data. Let's unravel this mystery about what Data Science really is, together. With over 33 certifications, I enjoy writing about Data Science to make it simpler for everyone to understand. Happy reading and do connect with me on my LinkedIn to know more!

- Yash Gupta
