When we evaluate a machine learning model, the confusion matrix and the metrics derived from it, including likelihood ratios, are usually part of the analysis. In machine learning, a confusion matrix, also known as an error matrix, is a table layout that allows visualization of an algorithm's performance.

In this article, we’ll study the Likelihood Ratios of a confusion matrix and how to calculate them.

What are Confusion Matrices?

When evaluating a machine learning model, most people focus on improving its accuracy and precision. These are, of course, important metrics. But by digging into the additional metrics a confusion matrix offers and understanding what they mean, data scientists can derive more meaning from their data.

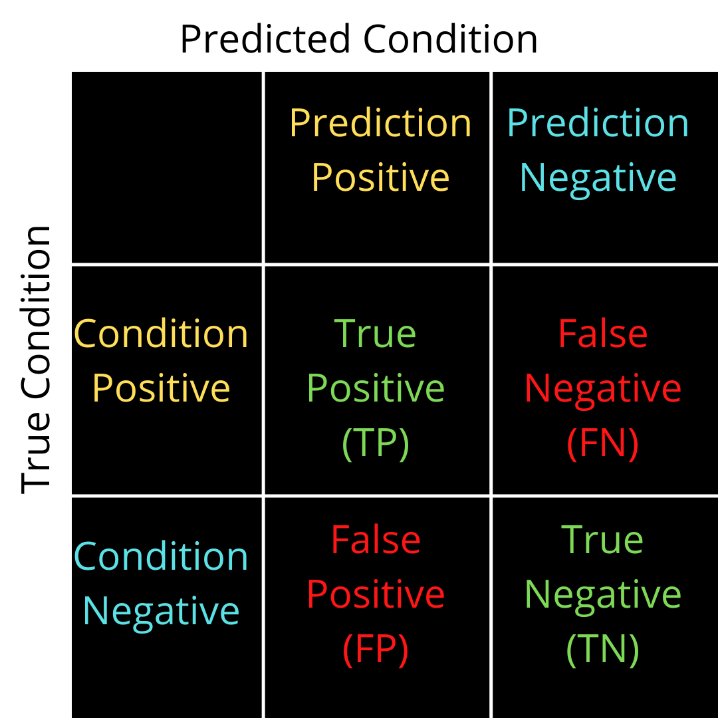

The name comes from the fact that the matrix shows where the model confuses one class with another, that is, where the predicted values differ from the true values. For a two-class problem there are four types of values in the confusion matrix. Condition positive covers the cases that actually have the condition: each is either a True Positive (correctly predicted as positive) or a False Negative (wrongly predicted as negative). Condition negative covers the cases that do not have the condition: each is either a True Negative or a False Positive.
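As a minimal sketch of how these four values can be read off in practice, the snippet below builds a two-class confusion matrix with scikit-learn and unpacks its cells. The labels in `y_true` and `y_pred` are made up for illustration, with 1 standing for "condition present" and 0 for "condition absent".

```python
from sklearn.metrics import confusion_matrix

# Hypothetical labels for illustration: 1 = condition present, 0 = absent
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]

# With labels=[0, 1], rows are the actual classes and columns the predictions,
# so flattening the 2x2 matrix yields TN, FP, FN, TP in that order.
tn, fp, fn, tp = confusion_matrix(y_true, y_pred, labels=[0, 1]).ravel()

print(f"TP={tp}, FP={fp}, FN={fn}, TN={tn}")  # TP=3, FP=1, FN=1, TN=5
```

Passing `labels=[0, 1]` explicitly fixes the row and column order, so the unpacking does not depend on which labels happen to appear in the data.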

Confusion matrices can help you derive multiple metrics for your data, namely:

- Sensitivity or True Positive Rate

- Specificity

- Precision and Recall

- Likelihood Ratios

- F1 Score

This unique contingency table has two dimensions (“actual” and “predicted”), and identical sets of “classes” in both dimensions.

If you are new to confusion matrices, find out more about them here -> Confusion Matrices

What are Confusion Matrix Likelihood Ratios?

Likelihood ratios (LR) are generally used in medical testing to interpret diagnostic tests. A likelihood ratio tells you how much a test result changes the likelihood that a patient has a disease or condition. The higher the ratio, the more likely it is that the patient has the disease or condition, and vice versa. Hence, likelihood ratios can help a physician rule a disease in or out.

Interpretation of Confusion Matrix Likelihood Ratios

The value of a confusion matrix likelihood ratio ranges from zero to infinity. As already mentioned, the higher the value, the more likely the patient has the condition. As an example, let’s say a positive test result has an LR of 9. This result is 9 times more likely to happen in a patient with the condition than in a patient without it.

A rule of thumb (McGee, 2002; Sloane, 2008) for interpreting them:

0 to 1: decreased evidence for a disease. Values closer to zero produce a larger decrease in the probability of disease. For example, an LR of 0.1 decreases the probability by roughly 45%, while an LR of 0.5 decreases it by roughly 15%.

1: no diagnostic value.

Above 1: increasing evidence for a disease. The farther away from 1, the more chance of disease. For example, an LR of 2 increases the probability by 15%, while an LR of 10 increases the probability by 45%. An LR over 10 is very strong evidence to rule in disease.
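The percentage shifts in the rule of thumb are approximations. The exact change depends on the probability you start from, because a likelihood ratio multiplies odds rather than probabilities. As a rough sketch, the function below converts a pre-test probability to odds, multiplies by the LR, and converts back. The function name and the 30% starting probability are chosen here purely for illustration.

```python
def post_test_probability(pre_test_probability, likelihood_ratio):
    """Apply a likelihood ratio to a pre-test probability via odds."""
    pre_test_odds = pre_test_probability / (1 - pre_test_probability)
    post_test_odds = pre_test_odds * likelihood_ratio
    return post_test_odds / (1 + post_test_odds)


# Hypothetical pre-test probability of 30%
pre_test = 0.30
for lr in (0.1, 0.5, 1, 2, 10):
    post = post_test_probability(pre_test, lr)
    print(f"LR={lr}: {pre_test:.0%} -> {post:.1%}")
```

An LR of 1 leaves the probability unchanged, which matches the "no diagnostic value" entry above.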

Read more about how to interpret likelihood ratios here: Likelihood ratios and the math behind them

How to calculate Confusion Matrix Likelihood Ratios?

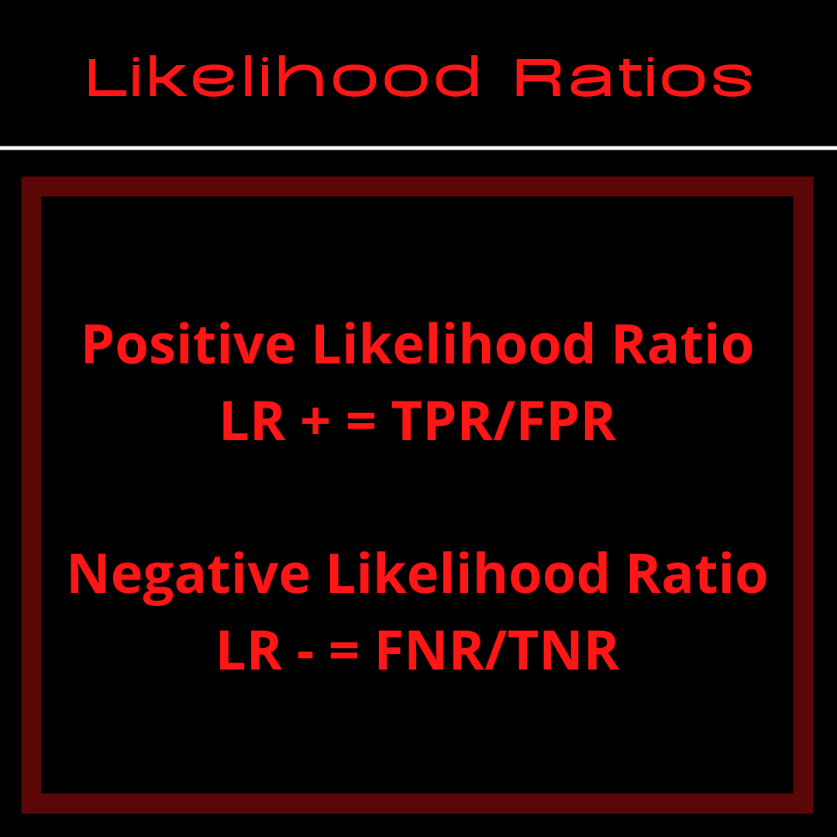

A confusion matrix yields two likelihood ratios: the positive LR and the negative LR.

To calculate the positive LR, you need the True Positive Rate (the probability of detection) and the False Positive Rate (the probability of false alarm). Similarly, the negative LR is calculated from the False Negative Rate and the True Negative Rate. Sensitivity and specificity are other names for the True Positive Rate and the True Negative Rate, so they can also be used to calculate the likelihood ratios.

Note: With sensitivity and specificity expressed as percentages, the LRs can be calculated as follows:

Positive Likelihood Ratio = Sensitivity/(100-Specificity)

Negative Likelihood Ratio = (100-Sensitivity)/Specificity
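The two percentage-based formulas can be sketched as a small helper. The function name and the example figures (90% sensitivity, 80% specificity) are hypothetical and only meant to show the arithmetic.

```python
def likelihood_ratios_from_percent(sensitivity_pct, specificity_pct):
    """Return (LR+, LR-) from sensitivity and specificity given in percent."""
    lr_positive = sensitivity_pct / (100 - specificity_pct)
    lr_negative = (100 - sensitivity_pct) / specificity_pct
    return lr_positive, lr_negative


# Hypothetical test with 90% sensitivity and 80% specificity
lr_pos, lr_neg = likelihood_ratios_from_percent(90, 80)
print(f"LR+ = {lr_pos}, LR- = {lr_neg}")  # LR+ = 4.5, LR- = 0.125
```

Note that a specificity of exactly 100% makes the positive LR undefined (division by zero), and a specificity of 0% does the same for the negative LR, so real code would need to handle those cases.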

Calculation of the positive LR using TPR and FPR:

The Positive Likelihood Ratio using the True Positive Rate and False Positive Rate:

LR + = TPR/FPR

Where:

TPR = TP/P = TP/(TP + FN) = 1 – FNR

And

FPR = FP/N = FP/(FP + TN) = 1 – TNR

Calculation of the negative LR using FNR and TNR:

The Negative Likelihood Ratio using the False Negative Rate and True Negative Rate is simply

LR – = FNR/TNR

Where:

FNR = FN/P = FN/(FN + TP) = 1 – TPR

And

TNR = TN/N = TN/(TN + FP) = 1 – FPR
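To make the rate-based formulas concrete, here is a small sketch that goes from the four raw counts to both likelihood ratios. The counts (TP=90, FP=40, FN=10, TN=160) are invented for illustration and the function name is an assumption, not part of any library.

```python
def likelihood_ratios_from_counts(tp, fp, fn, tn):
    """Return (LR+, LR-) computed from raw confusion matrix counts."""
    tpr = tp / (tp + fn)  # sensitivity
    fpr = fp / (fp + tn)  # 1 - specificity
    fnr = fn / (fn + tp)  # 1 - sensitivity
    tnr = tn / (tn + fp)  # specificity
    return tpr / fpr, fnr / tnr


# Hypothetical counts: 100 condition-positive and 200 condition-negative cases
lr_pos, lr_neg = likelihood_ratios_from_counts(tp=90, fp=40, fn=10, tn=160)
print(f"LR+ = {lr_pos:.3f}, LR- = {lr_neg:.3f}")
```

With these counts the sensitivity is 90% and the specificity is 80%, so the result should agree with what the sensitivity and specificity formulas give for the same test.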

One can use these formulas or just the formulas using sensitivity and specificity to find out the Confusion Matrix Likelihood Ratios easily.

How to find out the Confusion Matrix Likelihood Ratios using Python?

Finding accuracy and other metrics from a confusion matrix is easy in Python. When you want more than just accuracy and precision, you can use the NumPy library together with the confusion_matrix function from sklearn, which also works for multi-class cases. The code is as follows:

    import numpy as np
    from sklearn.metrics import confusion_matrix

    # y_true and y_pred are assumed to hold your actual and predicted labels
    cm = confusion_matrix(y_true, y_pred)

    # Per-class counts (rows of cm are actual classes, columns are predictions)
    FP = cm.sum(axis=0) - np.diag(cm)
    FN = cm.sum(axis=1) - np.diag(cm)
    TP = np.diag(cm)
    TN = cm.sum() - (FP + FN + TP)

    # Sensitivity, hit rate, recall, or true positive rate
    TPR = TP / (TP + FN)
    # Specificity or true negative rate
    TNR = TN / (TN + FP)
    # Precision or positive predictive value
    PPV = TP / (TP + FP)
    # Negative predictive value
    NPV = TN / (TN + FN)
    # Fall out or false positive rate
    FPR = FP / (FP + TN)
    # False negative rate
    FNR = FN / (TP + FN)
    # False discovery rate
    FDR = FP / (TP + FP)
    # Overall accuracy
    ACC = (TP + TN) / (TP + FP + FN + TN)

Once you’ve acquired the values for TPR, TNR, FPR, and FNR from the code above, you can use them to find the likelihood ratios with the formulas given earlier.
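As a sketch of that last step, the snippet below computes per-class likelihood ratios with NumPy, treating each class in turn as "positive" against all the others. The 3-class matrix is hypothetical, and the choice to return NaN where a denominator is zero is an assumption made here, not something the formulas prescribe.

```python
import numpy as np

# Hypothetical 3-class confusion matrix (rows = actual, columns = predicted)
cm = np.array([
    [50,  5,  5],
    [10, 30, 10],
    [ 0, 10, 80],
])

# Per-class counts, one entry per class (one-vs-rest)
TP = np.diag(cm)
FP = cm.sum(axis=0) - TP
FN = cm.sum(axis=1) - TP
TN = cm.sum() - (TP + FP + FN)

TPR = TP / (TP + FN)
FPR = FP / (FP + TN)
FNR = FN / (TP + FN)
TNR = TN / (TN + FP)

# Divide only where the denominator is positive; other entries stay NaN
LR_pos = np.divide(TPR, FPR, out=np.full_like(TPR, np.nan), where=FPR > 0)
LR_neg = np.divide(FNR, TNR, out=np.full_like(TNR, np.nan), where=TNR > 0)

print("LR+ per class:", np.round(LR_pos, 3))
print("LR- per class:", np.round(LR_neg, 3))
```

For the two-class case, recent scikit-learn releases (1.2 and later) also provide `sklearn.metrics.class_likelihood_ratios`, which returns both ratios directly from the true and predicted labels.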

Conclusion

Confusion matrix likelihood ratios and other metrics that are offered by it are really important for any and all ML models (more so for a diagnostic test) because of the important information that they convey. Your model might be the best for the prediction to be made but more than that, it is important to tell your stakeholders what story the data and predictions are telling.

Likelihood ratios are one of the many metrics that are in use from the confusion matrix and therefore, if you think you need more than just likelihood ratios to convey information about your predictions, go ahead and research which metric works best for your model.

Note: As much as the metrics matter, domain knowledge plays an important role in understanding the story behind the data.

Let us know in the comments below if you use the Confusion Matrix Likelihood Ratios in your analysis and how it worked for you!

For more such content, check out our blog -> Buggy Programmer

An eternal learner, I believe Data is the panacea to the world's problems. I enjoy Data Science and all things related to data. Let's unravel this mystery about what Data Science really is, together. With over 33 certifications, I enjoy writing about Data Science to make it simpler for everyone to understand. Happy reading and do connect with me on my LinkedIn to know more!

- Yash Gupta