Preparing data for analysis is tedious, and if you don’t know when to do data wrangling versus data cleaning, your entire analysis can be in vain. Wrangling and cleaning your data before you start an analysis or data exploration matters because it removes elements that could be detrimental to your outcomes. Clean data is also easier to understand, so your stakeholders will read it more accurately and eventually make better decisions. The two terms are sometimes used interchangeably, but Data Wrangling is the wider term and Data Cleaning is one part of it.


Data Wrangling vs Data Cleaning: what are they?

Data Wrangling:

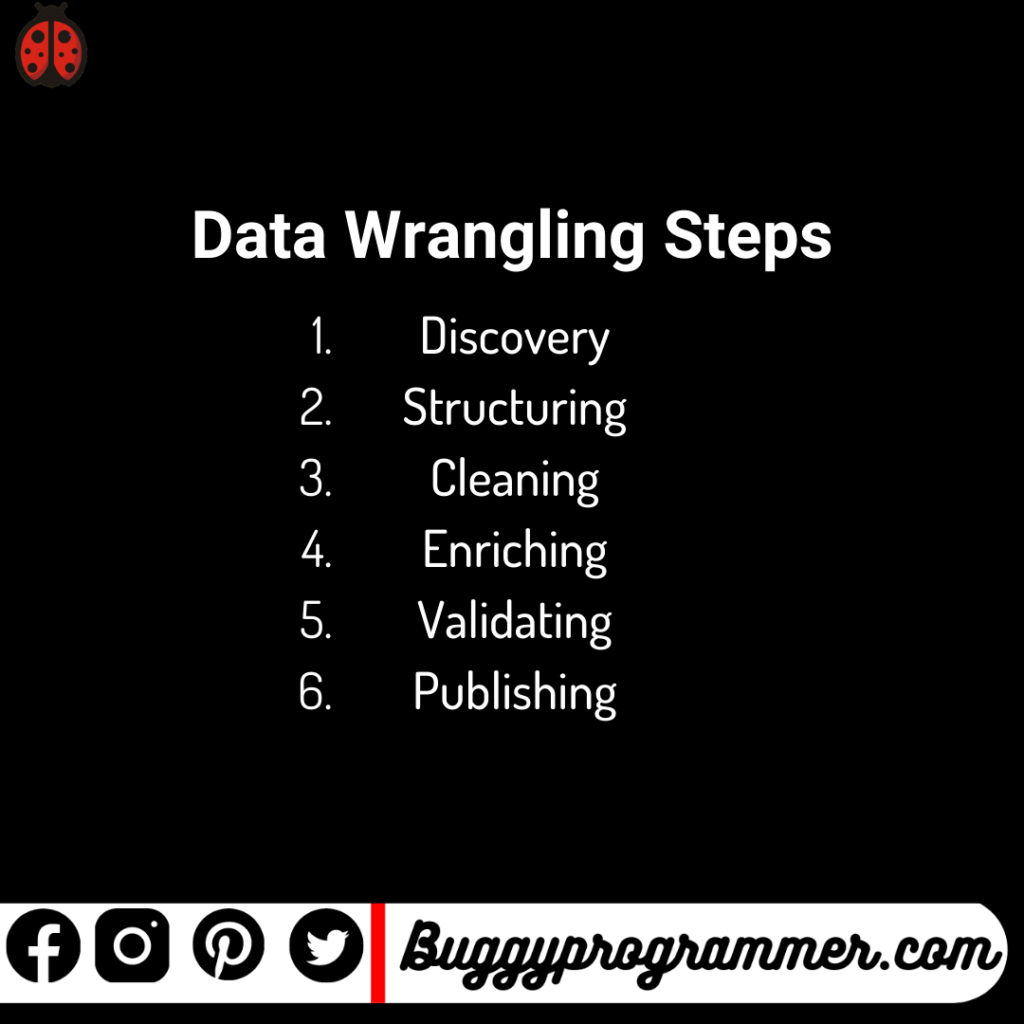

Raw data, especially when it is recorded manually or depends on human input at any stage, is usually not analysis-ready and cannot be funneled into a data pipeline for ETL or analysis until it has been prepared. Data Wrangling is that preparation.

To get deeper intelligence out of what are sometimes multiple data sources, and to provide accurate, actionable insights, data scientists spend time collecting, organizing, and cleaning unruly data before their analysis. This lets them derive more from the data, which in turn leads to better decision-making by senior leaders in the organization.

The different components of Data Wrangling are as follows:

- Data Acquisition

- Joining Data

- Data Cleansing
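The three components above can be sketched in a few lines of Pandas. This is only a minimal illustration: the two inline CSV sources, their column names, and the choice to drop orders with no recorded amount are all assumptions made for the example, not part of any real dataset.

```python
import io
import pandas as pd

# Hypothetical raw inputs standing in for two separate data sources.
orders_csv = io.StringIO(
    "order_id,customer_id,amount\n"
    "1,101,250.0\n"
    "2,102,\n"
    "3,101,75.5\n"
)
customers_csv = io.StringIO(
    "customer_id,city\n"
    "101,Pune\n"
    "102,Delhi\n"
)

# 1. Data acquisition: load each source into a DataFrame.
orders = pd.read_csv(orders_csv)
customers = pd.read_csv(customers_csv)

# 2. Joining data: attach customer attributes to every order.
combined = orders.merge(customers, on="customer_id", how="left")

# 3. Data cleansing: drop orders whose amount was never recorded
#    (an illustrative choice; imputing the value is another option).
wrangled = combined.dropna(subset=["amount"]).reset_index(drop=True)

print(wrangled)
```

A left join is used here so that no order is lost even if its customer record is missing from the second source.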

Read more about data wrangling here: Data Wrangling

Data Cleaning:

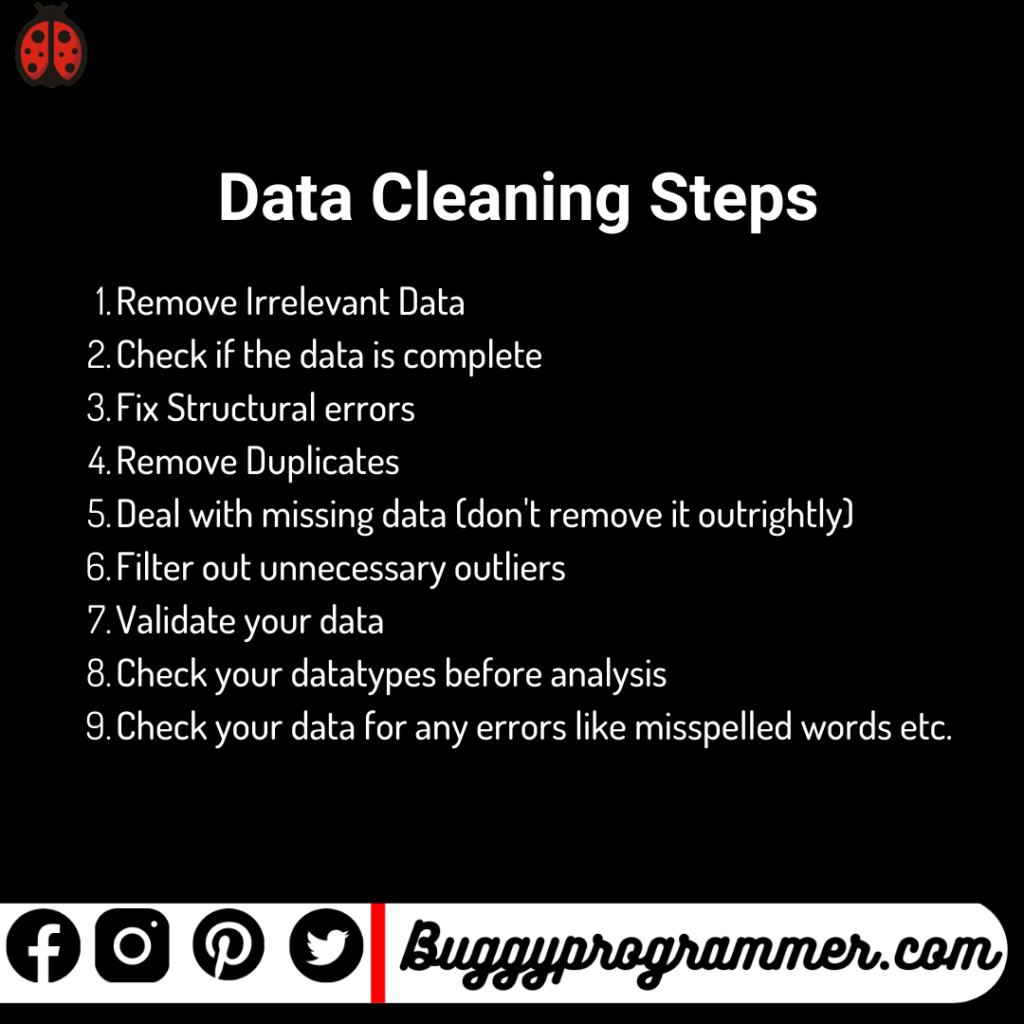

Data Cleaning is the narrower of the two concepts. It is essentially the process of removing inherent errors in data that might distort your analysis or make it less valuable. It can take different forms depending on how and where the data was collected, including deleting empty cells or rows in an Excel file, removing outliers from a dataset after inspecting a scatter plot, and standardizing or normalizing the inputs.
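As a rough sketch of those three forms of cleaning in Pandas, the example below drops fully empty rows, filters an outlier, and standardizes the columns. The data is made up, and the 1.5 × IQR rule is swapped in as a programmatic stand-in for spotting outliers on a scatter plot; treat both as assumptions.

```python
import numpy as np
import pandas as pd

# Hypothetical measurements: one fully empty row and one extreme value.
df = pd.DataFrame(
    {
        "height_cm": [160.0, 165.0, np.nan, 170.0, 175.0, 400.0],
        "weight_kg": [55.0, 60.0, np.nan, 68.0, 72.0, 70.0],
    }
)

# Delete rows in which every cell is empty.
df = df.dropna(how="all")

# Filter outliers with the 1.5 * IQR rule (an assumed substitute
# for eyeballing a scatter plot).
q1 = df["height_cm"].quantile(0.25)
q3 = df["height_cm"].quantile(0.75)
iqr = q3 - q1
in_range = df["height_cm"].between(q1 - 1.5 * iqr, q3 + 1.5 * iqr)
df = df[in_range]

# Standardize each column to zero mean and unit standard deviation.
standardized = (df - df.mean()) / df.std()

print(standardized)
```

Whether an extreme value is an error or a genuine observation is a judgment call, so it is worth looking at the rows a rule like this removes before discarding them.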

The idea of data cleaning is to ensure that as few errors as possible remain to influence your final analysis later in the data science life cycle. It is a very time-consuming process, but the value you can derive from a clean dataset is far greater than what you get from unclean data. You can download the image below to refer to when necessary.

Steps of Data Cleaning (not an exhaustive list):

- Remove Irrelevant Data

- Check if the data is complete

- Fix Structural errors

- Remove Duplicates

- Deal with missing data (don’t remove it outright)

- Filter out unnecessary outliers

- Validate your data

- Check your datatypes before analysis

- Check your data for errors such as misspelled words
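Several of these steps can be sketched together in Pandas. The dataset, the column names, the spelling fix, and the choice of median imputation below are all hypothetical; the right choices depend on your own data.

```python
import io
import pandas as pd

# Hypothetical raw export with typical problems baked in.
raw = io.StringIO(
    "id,city,age,internal_note\n"
    "1, Pune ,34,x\n"
    "2,pune,,y\n"
    "2,pune,,y\n"
    "3,DEHLI,30,z\n"
)
df = pd.read_csv(raw)

# Remove irrelevant data: a column the analysis does not need.
df = df.drop(columns=["internal_note"])

# Fix structural errors: stray whitespace and inconsistent casing.
df["city"] = df["city"].str.strip().str.title()

# Fix misspelled words with an explicit, reviewable mapping.
df["city"] = df["city"].replace({"Dehli": "Delhi"})

# Remove duplicates.
df = df.drop_duplicates().reset_index(drop=True)

# Deal with missing data by imputing instead of dropping the row.
df["age"] = df["age"].fillna(df["age"].median())

# Check datatypes: age should be a whole number.
df["age"] = df["age"].astype("int64")

# Validate the result with simple sanity checks.
assert df["id"].is_unique
assert df["age"].between(0, 120).all()

print(df)
```

Duplicates are removed only after the structural fixes, so that rows differing merely in spacing or casing are recognized as the same record.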

When to do Data Wrangling vs Data Cleaning:

Though they sound similar, Data Wrangling and Data Cleaning differ in what each process does. Data cleaning removes or corrects erroneous data in your dataset, while data wrangling focuses on transforming the entire dataset into a more workable or usable format. Wrangling becomes necessary when your data comes from multiple sources, probably with different methods of recording it, or when datasets need to be appended or merged to make complete sense.
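To make the multiple-sources case concrete, here is a small sketch that appends two exports recording the same facts in different ways. The store names, column names, and date formats are invented for illustration.

```python
import pandas as pd

# Hypothetical exports from two systems with different conventions.
store_a = pd.DataFrame(
    {"Date": ["2023-01-05", "2023-01-06"], "Sales": [120, 80]}
)
store_b = pd.DataFrame(
    {"sale_date": ["07/01/2023", "08/01/2023"], "revenue": [95, 110]}
)

# Bring both sources onto one schema: same column names, same date type.
a = store_a.rename(columns={"Date": "date", "Sales": "sales"})
a["date"] = pd.to_datetime(a["date"], format="%Y-%m-%d")

b = store_b.rename(columns={"sale_date": "date", "revenue": "sales"})
b["date"] = pd.to_datetime(b["date"], format="%d/%m/%Y")

# Record where each row came from before stacking the tables.
a["store"] = "A"
b["store"] = "B"

# Append the two sources into a single analysis-ready table.
combined = pd.concat([a, b], ignore_index=True)

print(combined)
```

Passing an explicit `format` to `pd.to_datetime` avoids ambiguity between day-first and month-first dates, which is a common problem when sources record dates differently.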

In simpler words, Data Cleaning improves the consistency and correctness of your data. Data Wrangling, on the other hand, improves its structure and usability.

You probably won’t need Data Wrangling if your data is collected automatically by software and its structure is not compromised. If it is collected in other ways, it is important to perform both Data Cleaning and Data Wrangling before you model or analyze your data.

How to wrangle and clean Data using Python?

Python is one of the two most used programming languages for data analysis and statistical modeling. It is also driving the future of data science. Python is fairly easy to use and is considered a beginner’s programming language. You can perform both Data Wrangling and Data Cleaning in Python using one of its most used libraries, Pandas.

Pandas is, in simple terms, a Python library for working with tabular data, much like the rows and columns of an Excel sheet. It can be used for data manipulation, transformation, extraction, mining, etc. The library is versatile and is built on top of NumPy, another widely used Python library.

Some of the capabilities of Pandas for Data Wrangling are as follows:

- Exploring data with the .describe() and .info() methods

- Dealing with missing values with the .isna() method

- Reshaping data using methods such as .melt() and .pivot()

- Filtering data using booleans and conditional statements

- Other functionality such as data visualization and dummy-variable creation

- Counting unique values using the .nunique() method
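A short sketch of a few of these capabilities follows, using a made-up table of student marks. Reshaping is shown here with `melt`, which turns a wide table into a long one; the column names and the score threshold are assumptions for the example.

```python
import pandas as pd

# Hypothetical wide-format table: one column per subject.
df = pd.DataFrame(
    {
        "student": ["Asha", "Ravi", "Meera"],
        "stream": ["science", "arts", "science"],
        "math": [82, 67, 91],
        "english": [74, 88, 79],
    }
)

# Reshape from wide to long: one row per student and subject.
long_df = df.melt(
    id_vars=["student", "stream"],
    value_vars=["math", "english"],
    var_name="subject",
    value_name="score",
)

# Filter with boolean conditions combined using & (and).
high_scores = long_df[
    (long_df["score"] >= 80) & (long_df["stream"] == "science")
]

# Create dummy (indicator) variables from a categorical column.
dummies = pd.get_dummies(df["stream"], prefix="stream")

# Count the distinct values in each column.
unique_counts = df.nunique()

print(long_df)
print(high_scores)
print(dummies)
print(unique_counts)
```

Each condition in a boolean filter needs its own parentheses, because `&` binds more tightly than comparison operators in Python.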

Find some of the code here to use in your own analysis:

import pandas as pd

#df is the dataset you want to use

df = pd.read_csv("filename.csv")

df.describe() #to summarize each numeric column (count, mean, spread, quartiles)

df.info() #to show each column's non-null count and datatype

df.isna().sum() #to count the missing values in each column

df = df.dropna(axis = 0, how = 'any') #to drop every row that has at least one missing value

df.nunique() #to see unique elements in each column

Find more about Data Wrangling vs Data Cleaning in Python here: Data Wrangling vs Data Cleaning in Python

You can use similar code with the dplyr and tidyr packages if you use R for your analysis and modeling.

Find more about Data Wrangling vs Data Cleaning in R here: Data Wrangling vs Data Cleaning in R

Conclusion

Companies often invest in data warehouses and data cleaning pipelines so that their analysts receive only cleaned data. This ensures that the data is ready to use and that little time is spent on cleaning. Data Wrangling and Cleaning form the second step of the data science life cycle, after defining the problem statement and collecting data, and are often estimated to take up almost 60% of the time spent on a project.

Well-known data scientists have repeatedly cited this work as highly important for producing a production-level model or a high-end analysis that stakeholders can use as required.

Try the Data Wrangling and Data Cleaning steps from this article on your own data and see how the results compare with those from untidy data.

For more such content, check out our website -> Buggy Programmer

An eternal learner, I believe Data is the panacea to the world's problems. I enjoy Data Science and all things related to data. Let's unravel this mystery about what Data Science really is, together. With over 33 certifications, I enjoy writing about Data Science to make it simpler for everyone to understand. Happy reading and do connect with me on my LinkedIn to know more!

- Yash Gupta
