Introduction

Evaluating a model is an important part of machine learning, and it involves reading many metrics. One of the best-known tools for evaluating a classification model is the confusion matrix, which not only sounds confusing but also confuses many beginners. In this article we will see what a confusion matrix is, why it is useful, and how to read it. Hope you find it helpful. Let’s get started.

Overview

- What is Confusion Matrix?

- How to read Confusion Matrix?

- Why Confusion Matrix?

- How to create Confusion Matrix using Scikit-Learn?

- How to plot Confusion matrix?

- Conclusion

What is Confusion Matrix?

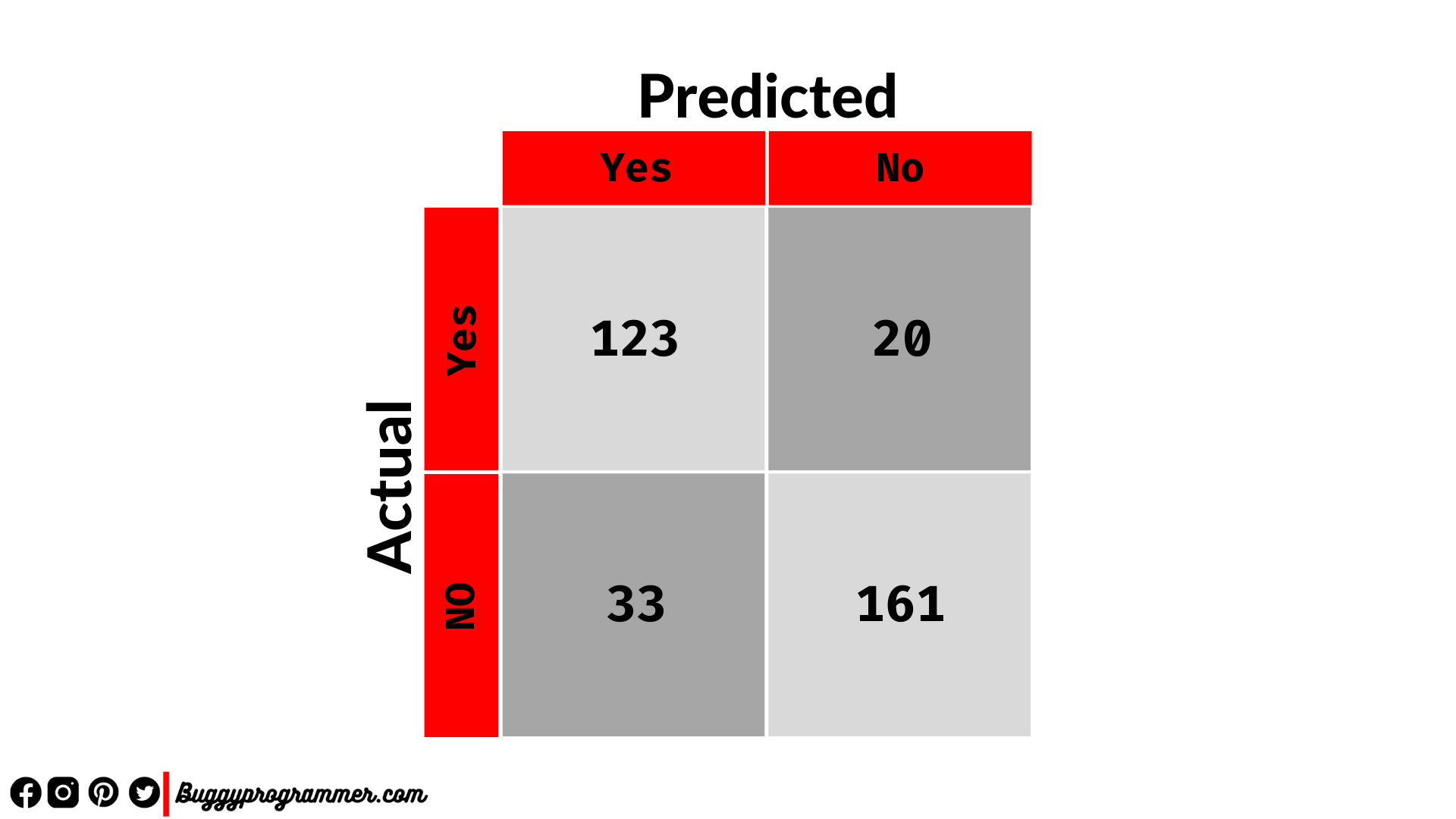

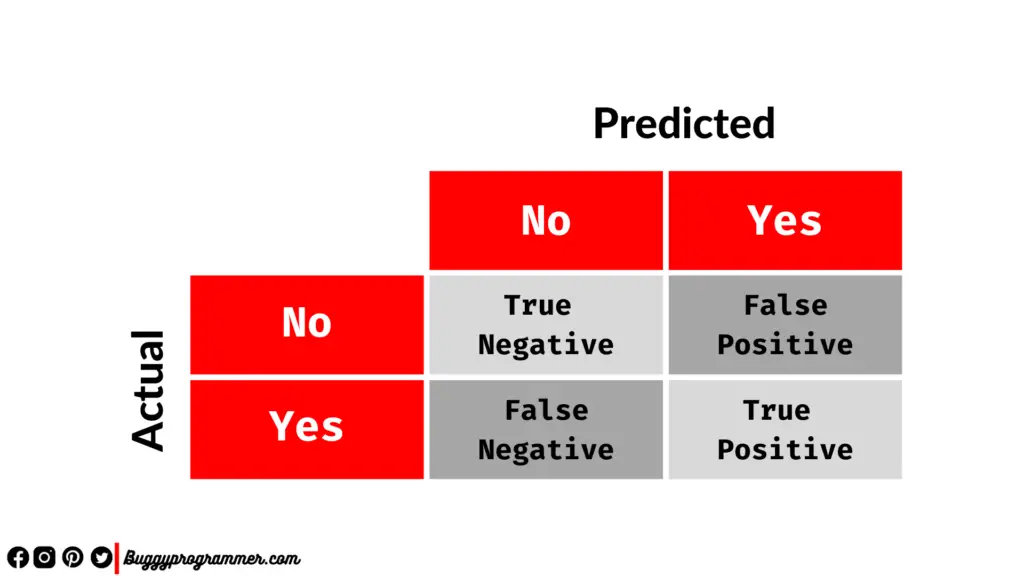

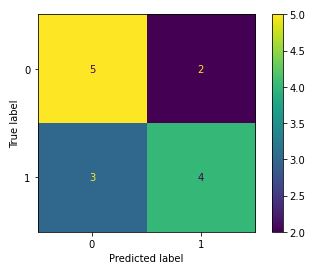

A confusion matrix is an NxN table of predicted versus actual labels, where N is the number of classes that the classification model has to distinguish. Each cell holds the number of samples that have a given actual label and a given predicted label. This gives us a very clear way of analyzing how well our model classifies each label, and it also shows which labels the model gets wrong. The image below shows the confusion matrix of a binary classification problem.
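To make the definition concrete, here is a minimal sketch that counts (actual, predicted) pairs into an NxN table by hand. The helper name `build_confusion_matrix` and the sample labels are made up for illustration. Rows are actual labels and columns are predicted labels.

```python
def build_confusion_matrix(actual, predicted, labels):
    """Count (actual, predicted) pairs into an N x N list of lists.

    Rows correspond to actual labels, columns to predicted labels.
    """
    index = {label: i for i, label in enumerate(labels)}
    matrix = [[0] * len(labels) for _ in labels]
    for a, p in zip(actual, predicted):
        matrix[index[a]][index[p]] += 1
    return matrix


# Hypothetical labels, not taken from the article's data
actual = [0, 0, 1, 1, 1, 0]
predicted = [0, 1, 1, 1, 0, 0]

cm_manual = build_confusion_matrix(actual, predicted, labels=[0, 1])
print(cm_manual)  # [[2, 1], [1, 2]]
```

In practice you would rarely write this yourself, but it shows that the matrix is nothing more than a table of counts.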

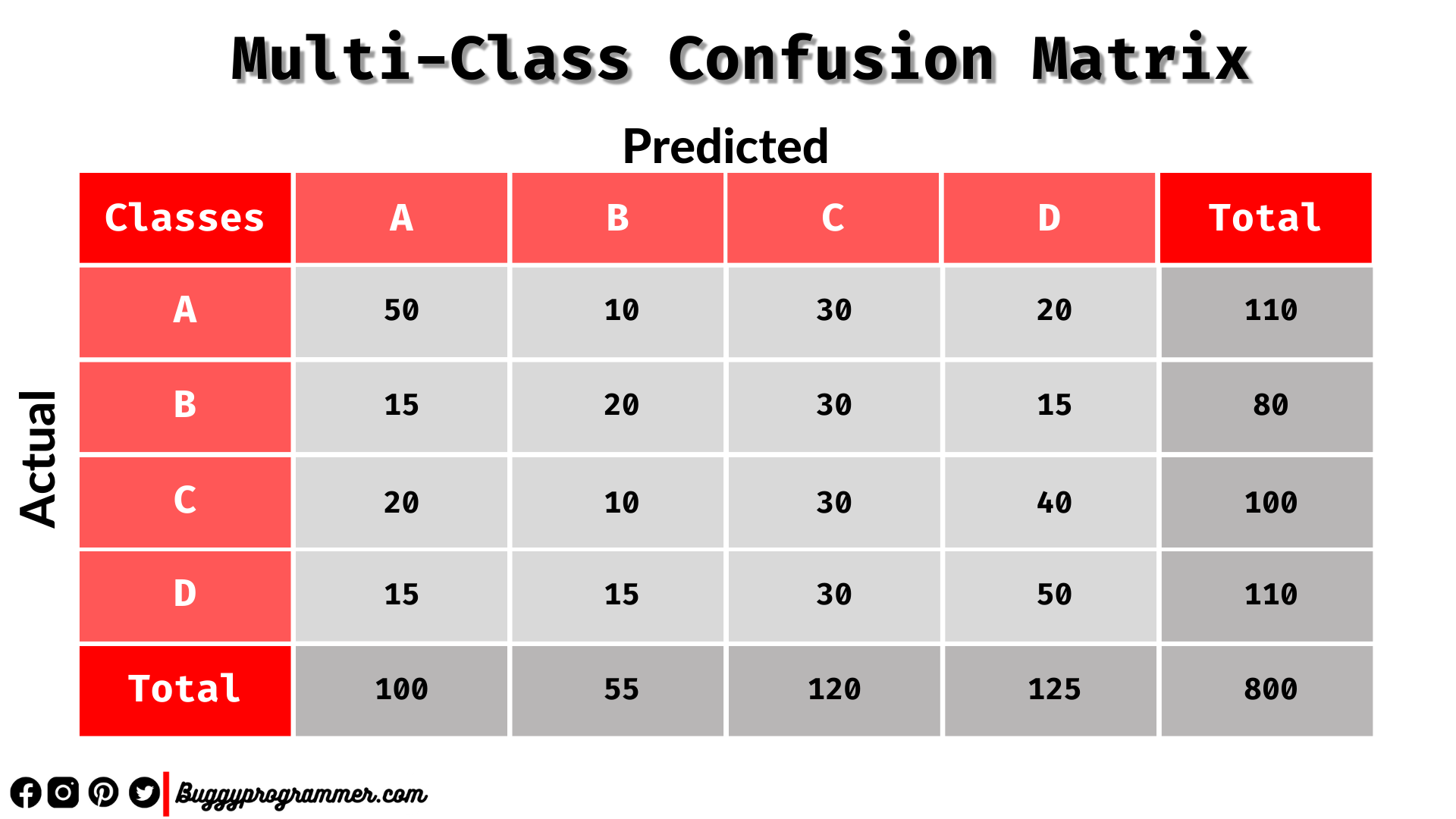

At first glance, this matrix can look confusing, which fits its name, although the name really comes from the fact that the matrix shows where the model confuses one class with another. The above confusion matrix is for a binary classification problem, but confusion matrices work for multi-class classification problems too. For N classes the matrix simply has N rows and N columns.
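As a rough illustration of the multi-class case, the sketch below builds a 3x3 confusion matrix with scikit-learn. The animal labels and predictions are invented for this example. The `labels` argument fixes the order of the rows and columns.

```python
from sklearn.metrics import confusion_matrix

# Hypothetical three-class data, made up for illustration
actual = ["cat", "dog", "bird", "cat", "dog", "bird", "cat"]
predicted = ["cat", "dog", "cat", "cat", "bird", "bird", "dog"]

# Row and column order: bird, cat, dog
labels = ["bird", "cat", "dog"]
cm_multi = confusion_matrix(actual, predicted, labels=labels)
print(cm_multi)
# [[1 1 0]
#  [0 2 1]
#  [1 0 1]]
```

The first row, for example, says that of the two actual birds, one was predicted as a bird and one as a cat.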

How to read Confusion Matrix?

The confusion matrix isn’t as confusing as it may seem. In fact, you will love it once you understand its core concept. The main idea is to compare each sample’s actual class with its predicted class to see how many samples the model classifies correctly and how many it does not.

In the confusion matrix, the main diagonal running from top left to bottom right (the one in light grey) shows the counts of samples our model classified correctly, while the other diagonal (the one in dark grey) shows the counts of samples it classified incorrectly.

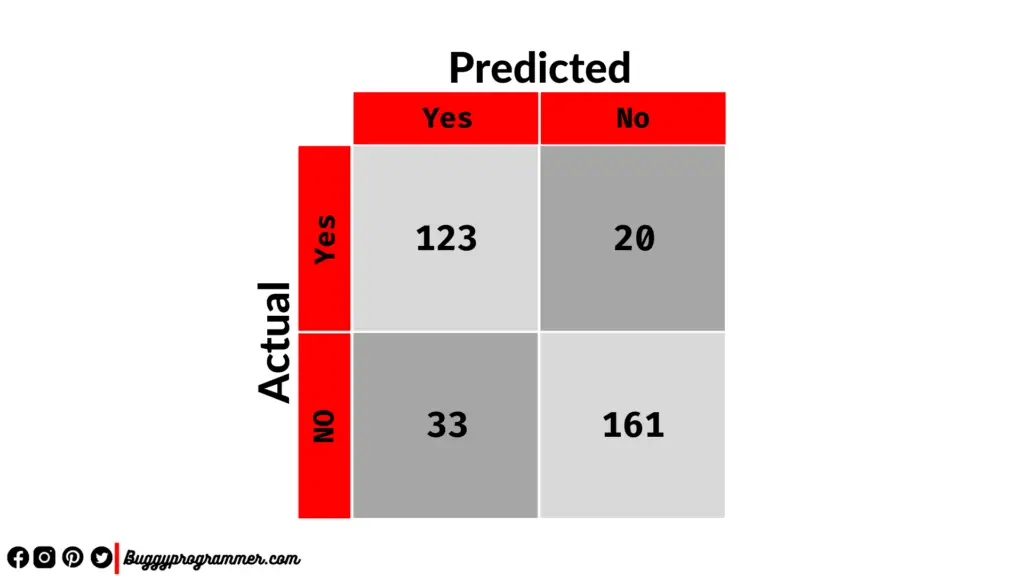

The above image represents a 2x2 matrix, which is the N = 2 case of the NxN matrix. Rows represent the actual values and columns represent the predicted values. The cells are labeled TN, FP, FN, and TP. Let’s see the meaning of each label.

| Label | Expansion | Explanation |
| --- | --- | --- |
| TP | True Positive | If the actual value is positive and the predicted value is also positive, then it is called True Positive |
| TN | True Negative | If the actual value is negative and the predicted value is also negative, then it is called True Negative |
| FP | False Positive | If the actual value is negative and the predicted value is positive, then it is called False Positive |
| FN | False Negative | If the actual value is positive and the predicted value is negative, then it is called False Negative |

Here a False Positive (FP) is called a Type 1 error and a False Negative (FN) is called a Type 2 error. More explicitly, a Type 1 error means that a negative value is falsely predicted as positive, and a Type 2 error means that a positive value is falsely predicted as negative.
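For a binary problem, the four counts can be read straight out of the matrix. The sketch below uses invented labels and assumes the classes are ordered as `[0, 1]`, with 1 as the positive class. With that ordering scikit-learn lays the matrix out as `[[TN, FP], [FN, TP]]`, so flattening it with `ravel()` yields the counts in that order.

```python
from sklearn.metrics import confusion_matrix

# Hypothetical binary labels: 1 = positive, 0 = negative
actual = [1, 0, 1, 1, 0, 0, 1, 0]
predicted = [1, 0, 0, 1, 1, 0, 1, 0]

# With labels=[0, 1] the layout is [[TN, FP], [FN, TP]]
tn, fp, fn, tp = confusion_matrix(actual, predicted, labels=[0, 1]).ravel()

print("TN:", tn, "FP (Type 1):", fp, "FN (Type 2):", fn, "TP:", tp)
# TN: 3 FP (Type 1): 1 FN (Type 2): 1 TP: 3
```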

Why Confusion Matrix?

Whether we are working on a binary or a multi-class classification problem, it is important to know which labels are predicted correctly and which are not, because a model may do well on some labels and poorly on others. This can happen because of class imbalance in the data or because the model itself performs poorly. Let’s see an example to understand this more clearly.

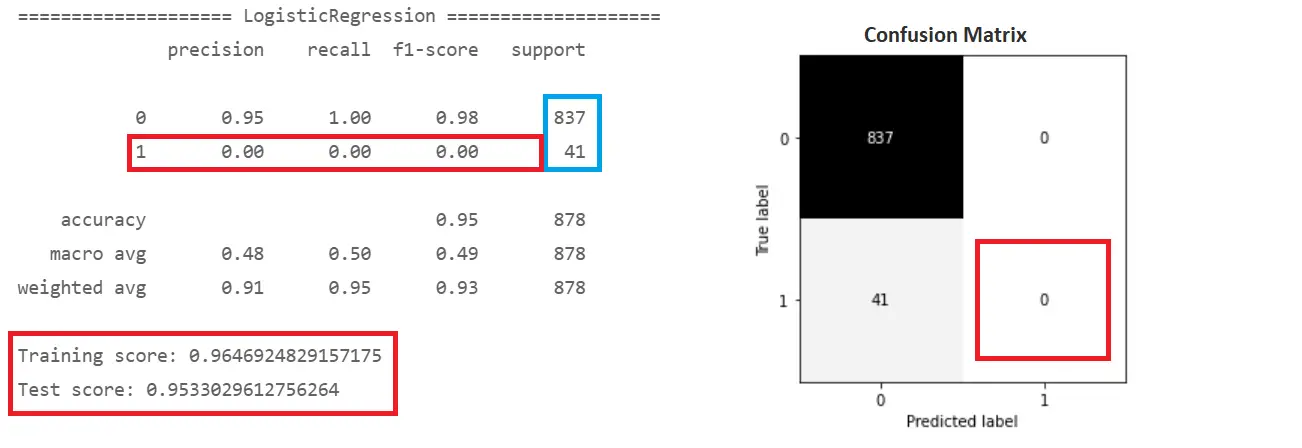

Suppose we want to predict whether it will rain today or not. We will use the accuracy metric to evaluate the model, see why it falls short here, and see how the confusion matrix helps us understand the results better. Take a look at the accompanying example.

Here the accuracy comes out at 96%, which means our model predicts correctly 96 times out of 100. But if you look closely at the results for each class, you will see that our model has not predicted a single record as ‘it rains’, while it has predicted 837 records correctly as ‘it doesn’t rain’. The model performs well on one class and fails completely on the other. If you judged the model solely on accuracy, you would never notice that it never predicts ‘it rains’. That is why you should always look at the confusion matrix and other metrics as well.

This weakness of our model also shows in the precision, recall, and F1 values of the classification report. These metrics are calculated for each class. For class 0, which is “it doesn’t rain”, precision is 95%, recall is 100%, and the F1 score is 98%. For the other class, all three metrics are 0.
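The same effect can be reproduced with a small sketch. The numbers below are invented and are not the article's rain dataset: 95 dry days, 5 rainy days, and a model that always predicts "no rain".

```python
from sklearn.metrics import accuracy_score, confusion_matrix

# Hypothetical imbalanced data: 0 = it doesn't rain, 1 = it rains
actual = [0] * 95 + [1] * 5
predicted = [0] * 100  # a model that never predicts rain

acc = accuracy_score(actual, predicted)
cm_rain = confusion_matrix(actual, predicted, labels=[0, 1])

print("Accuracy:", acc)  # 0.95
print(cm_rain)
# [[95  0]
#  [ 5  0]]
```

The accuracy looks excellent, yet the second column of the matrix is all zeros: the model has never predicted rain, and all five rainy days show up as false negatives.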
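A report of this kind can be produced with scikit-learn's `classification_report`. The sketch below uses made-up data (95 dry days, 5 rainy days, a model that always predicts class 0) rather than the article's dataset. Passing `zero_division=0` is a choice made here so that the undefined precision of the never-predicted class is reported as 0 without a warning.

```python
from sklearn.metrics import classification_report

# Hypothetical imbalanced data: 0 = it doesn't rain, 1 = it rains
actual = [0] * 95 + [1] * 5
predicted = [0] * 100  # a model that never predicts rain

# Human-readable table of per-class precision, recall, and F1
print(classification_report(actual, predicted, zero_division=0))

# The same numbers as a nested dict, keyed by the class label as a string
report = classification_report(
    actual, predicted, zero_division=0, output_dict=True
)
print(report["0"]["recall"], report["1"]["recall"])  # 1.0 0.0
```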

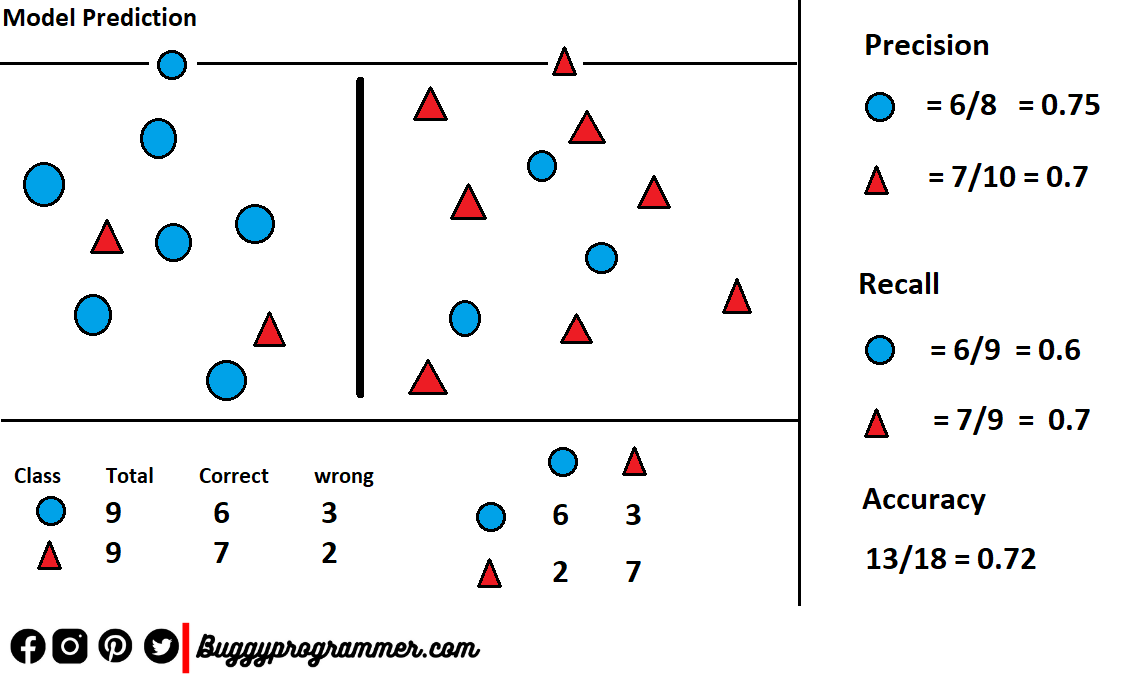

If you don’t know what these metrics are, here is a quick definition. Precision tells us, out of all values predicted as class “A”, how many actually belong to class “A”. It can be calculated as Precision = TruePositives / (TruePositives + FalsePositives). Recall tells us, out of all values that actually belong to class “A”, how many our model predicted correctly. It can be calculated as Recall = TruePositives / (TruePositives + FalseNegatives). See the accompanying picture.
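The two formulas translate directly into code. The sketch below is illustrative only: the function names and counts are made up, and returning 0.0 when a denominator is zero is a convention assumed here. The F1 score is included as the harmonic mean of precision and recall, 2 * P * R / (P + R).

```python
def precision(tp, fp):
    """Precision = TP / (TP + FP); 0.0 if nothing was predicted positive."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp, fn):
    """Recall = TP / (TP + FN); 0.0 if there are no actual positives."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def f1_score(p, r):
    """F1 = harmonic mean of precision and recall; 0.0 if both are zero."""
    return 2 * p * r / (p + r) if (p + r) else 0.0


# Hypothetical counts: 3 true positives, 1 false positive, 1 false negative
p = precision(tp=3, fp=1)
r = recall(tp=3, fn=1)
print(p, r, f1_score(p, r))  # 0.75 0.75 0.75

# A class that is never predicted gets 0 for every metric
print(precision(tp=0, fp=0), recall(tp=0, fn=5))  # 0.0 0.0
```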

How to create Confusion Matrix using Scikit-Learn?

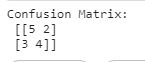

We can create a confusion matrix with scikit-learn’s confusion_matrix function. It takes the actual and predicted labels as input and returns the confusion matrix as an array. See the example below.

from sklearn.metrics import confusion_matrix

cm = confusion_matrix(actual, predicted)

print('Confusion Matrix:\n',cm)
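The snippet above assumes that `actual` and `predicted` already exist. As one possible way to obtain them, the sketch below trains a small model on synthetic data. The dataset, the choice of `LogisticRegression`, and the split parameters are all assumptions made for illustration, not part of the original example.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import confusion_matrix
from sklearn.model_selection import train_test_split

# Synthetic binary classification data (illustrative only)
X, y = make_classification(n_samples=200, n_features=5, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Any classifier would do; logistic regression keeps the sketch short
model = LogisticRegression().fit(X_train, y_train)

actual = y_test                    # true labels of the held-out data
predicted = model.predict(X_test)  # labels predicted by the model

cm = confusion_matrix(actual, predicted)
print('Confusion Matrix:\n', cm)
```

Computing the matrix on held-out data, rather than on the training data, gives a more honest picture of how the model behaves on unseen samples.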

How to plot confusion matrix?

Now we can plot the confusion matrix directly using the ConfusionMatrixDisplay class, as shown below.

import matplotlib.pyplot as plt

from sklearn.metrics import ConfusionMatrixDisplay

disp = ConfusionMatrixDisplay(confusion_matrix=cm)

disp.plot()

plt.show()


Conclusion

We saw how to create a confusion matrix using scikit-learn, why a confusion matrix is needed, and how to read it. The confusion matrix plays an important role in classification tasks because it shows the performance of the model class by class. It is therefore important to know the terms involved and the right way to read it.

Data Scientist with 3+ years of experience in building data-intensive applications in diverse industries. Proficient in predictive modeling, computer vision, natural language processing, data visualization etc. Aside from being a data scientist, I am also a blogger and photographer.

- Aman Kumar
