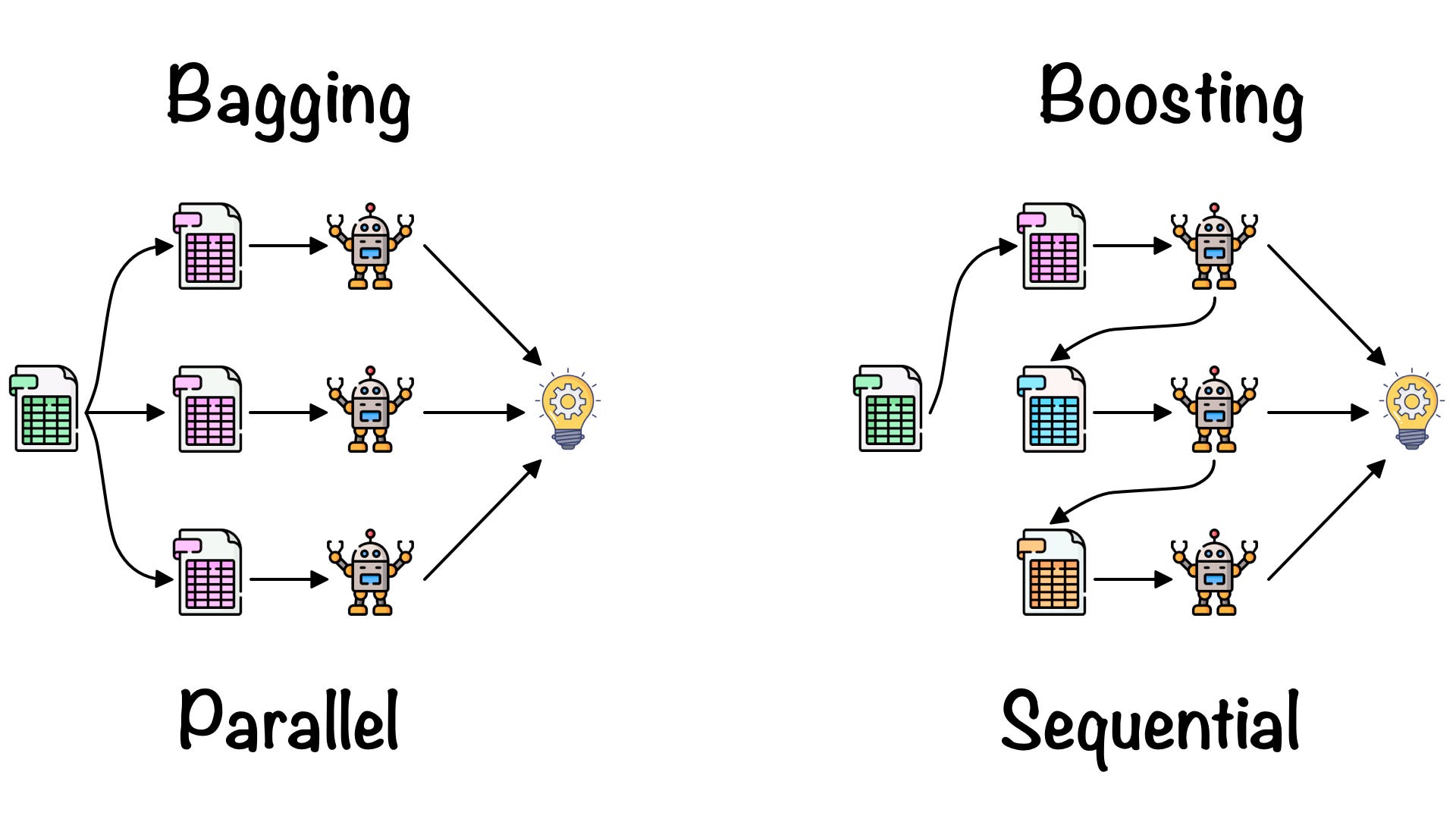

Bagging and boosting are two popular ensemble learning methods, in which multiple weak models (the individual models in the ensemble) are combined to produce a strong model. Both techniques aim to perform better than any single model could on its own. So, let’s look at them in detail.

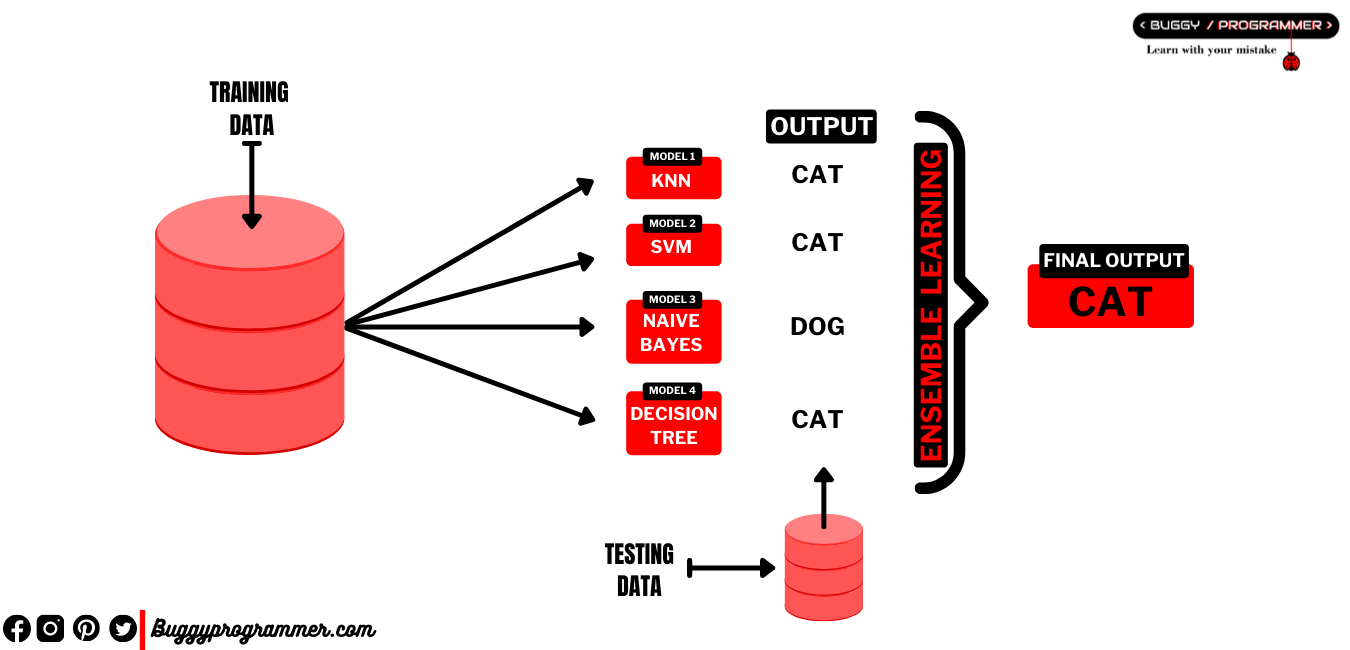

What is Ensemble learning?

Ensemble learning is a technique that combines multiple models to improve predictive performance. The idea is simple: instead of relying on one model for a problem, we train several models on the same problem and combine their predictions. For classification, each model casts a vote and the value with the majority of votes becomes the final output; for regression, the predictions are typically averaged.
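The combining step can be sketched in a few lines of Python. This is only an illustration: the function names `majority_vote` and `average_prediction` and the sample predictions are made up for this example, and ties in the vote are simply resolved in favor of the label seen first.

```python
from collections import Counter
from statistics import mean

def majority_vote(predictions):
    """Return the label that the most models predicted."""
    # most_common(1) returns [(label, count)] for the most frequent label
    return Counter(predictions).most_common(1)[0][0]

def average_prediction(predictions):
    """Return the mean of the models' numeric predictions."""
    return mean(predictions)

# Hypothetical outputs of five classifiers for one input
class_votes = ["cat", "dog", "cat", "cat", "dog"]
print(majority_vote(class_votes))              # cat

# Hypothetical outputs of three regressors for one input
regression_outputs = [2.0, 3.0, 4.0]
print(average_prediction(regression_outputs))  # 3.0
```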


What is Bagging?

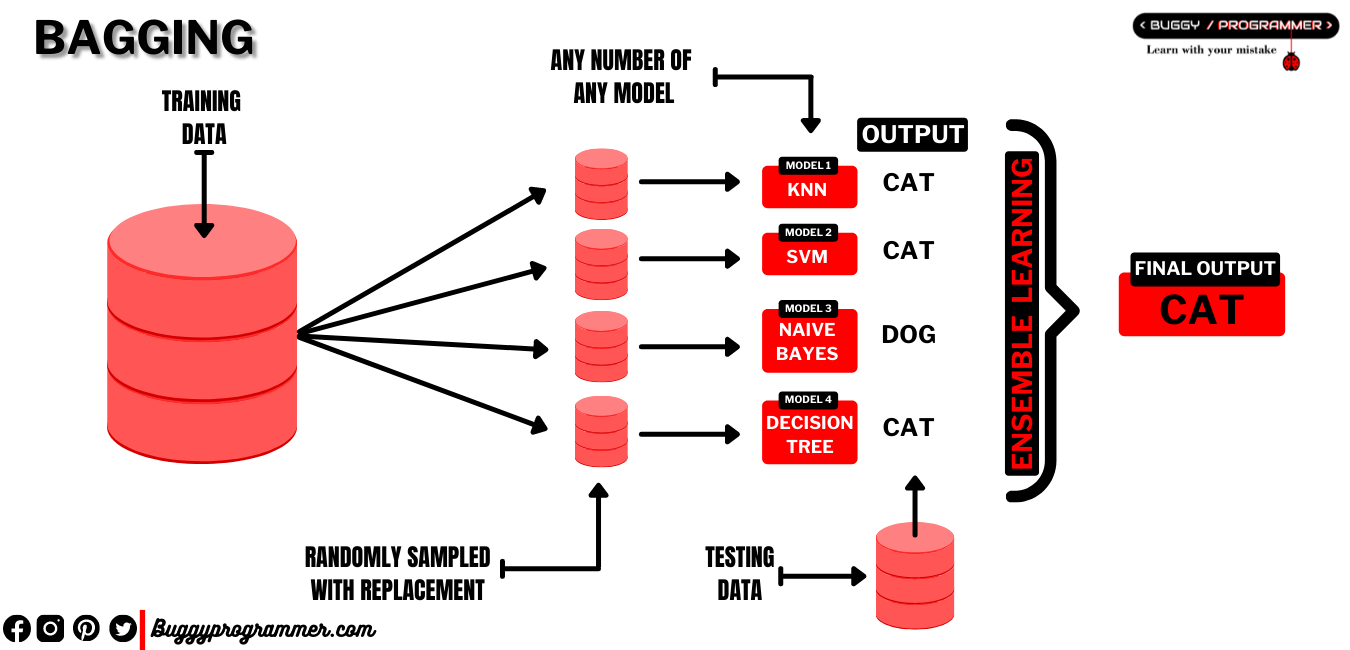

Bagging, which is short for ‘Bootstrap Aggregation’, is an ensemble method. It is used when a model is overfitting: it lowers the variance of the model, which reduces the overfitting. Bagging is a parallel ensemble method. It fits the weak learners (the individual models that are combined to form the ensemble model) in parallel, which encourages independence between the weak learners and helps balance the bias-variance tradeoff.

How does Bagging work?

In bagging, instead of creating a single model and training it on the whole training data, as we normally do, we create multiple weak learners (models), each trained on a subset of the training data. The number of models and the size of each subset are decided by the practitioner.

Each subset is sampled randomly from the training data with replacement, separately for each new model. Here “with replacement” means that a record can be drawn more than once, so a sampled subset can contain duplicate records.
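To make "with replacement" concrete, here is a minimal sketch using only Python's standard `random` module. The helper name `bootstrap_sample` and the ten toy records are assumptions for illustration.

```python
import random

def bootstrap_sample(records, sample_size, rng):
    """Draw sample_size records uniformly at random, with replacement."""
    # random.choices samples with replacement, so a record can repeat
    return rng.choices(records, k=sample_size)

rng = random.Random(0)        # fixed seed so repeated runs give the same sample
records = list(range(10))     # ten toy records, identified by 0..9
sample = bootstrap_sample(records, sample_size=10, rng=rng)

print(sample)
print(len(set(sample)), "distinct records out of", len(sample))
```

Because some records are drawn more than once, others are usually left out of a given sample, which is why each weak learner ends up seeing a slightly different view of the data.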

Each sampled subset is fed to a weak learner for training, and the trained weak learners are combined to form a strong learner. At prediction time, the strong learner collects the output of every weak learner for the same input and returns the value with the most votes (if it is a classification problem) or the average of the outputs (if it is a regression problem).
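Putting the steps together, the whole bagging procedure might look like the sketch below. It is not a production implementation: the weak learner is a hand-written one-dimensional threshold rule (`train_stump`), and the function names and the toy dataset are assumptions chosen to keep the example self-contained.

```python
import random
from collections import Counter

def train_stump(data):
    """Fit a 1-D threshold rule with the fewest errors on data.
    data is a list of (x, label) pairs, with label 0 or 1."""
    best = None
    for threshold in sorted({x for x, _ in data}):
        for polarity in (0, 1):  # label predicted when x >= threshold
            errors = sum((polarity if x >= threshold else 1 - polarity) != y
                         for x, y in data)
            if best is None or errors < best[0]:
                best = (errors, threshold, polarity)
    _, threshold, polarity = best
    return lambda x: polarity if x >= threshold else 1 - polarity

def train_bagging(data, n_models, sample_size, seed=0):
    """Train n_models stumps, each on its own bootstrap sample."""
    rng = random.Random(seed)
    models = []
    for _ in range(n_models):
        sample = rng.choices(data, k=sample_size)  # random, with replacement
        models.append(train_stump(sample))
    return models

def predict_bagging(models, x):
    """Classify x by majority vote over all weak learners."""
    votes = Counter(model(x) for model in models)
    return votes.most_common(1)[0][0]

# Toy data: small x values are class 0, large x values are class 1
data = [(1, 0), (2, 0), (3, 0), (4, 0), (6, 1), (7, 1), (8, 1), (9, 1)]
models = train_bagging(data, n_models=11, sample_size=len(data))
print(predict_bagging(models, 0), predict_bagging(models, 10))
```

The models are trained in a plain loop here, but since no model depends on another, the loop iterations could run in parallel.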

What is Boosting?

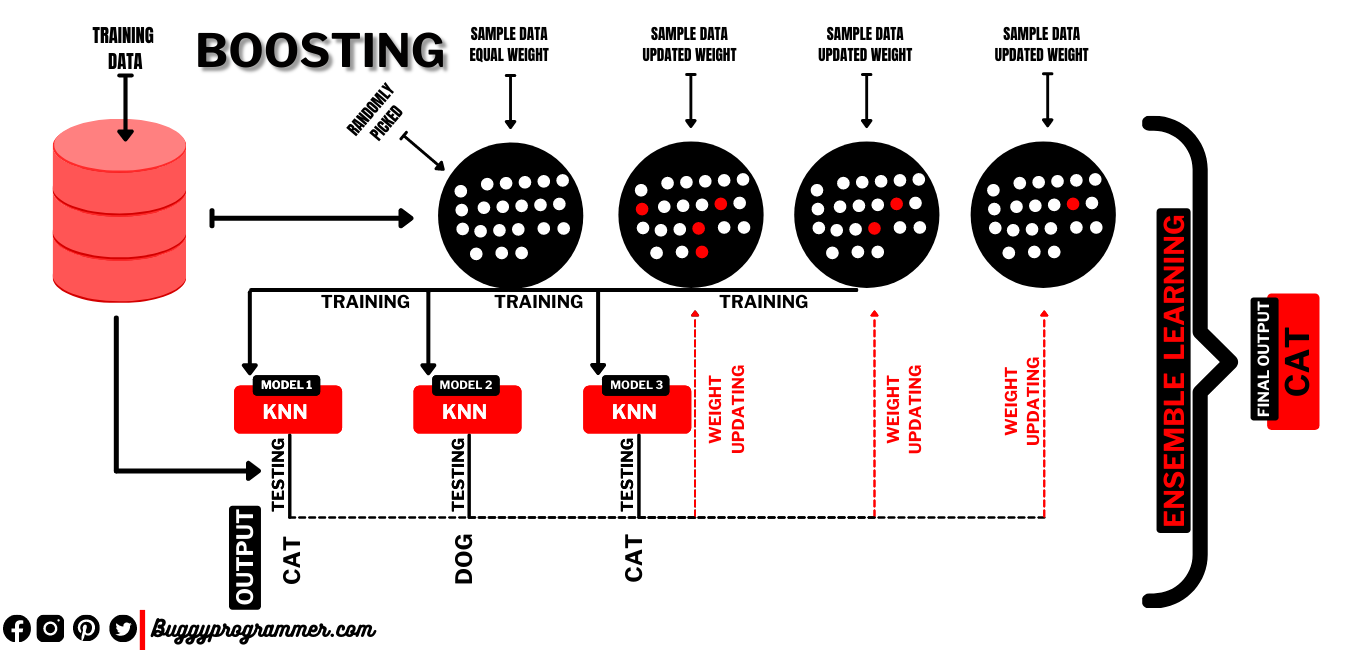

Boosting is a sequential ensemble learning method: it trains the weak learners one after another. It decreases bias error and combines the efforts of all the weak learners to build a strong predictive model. It is used to improve the performance of underfitting models.

Unlike bagging, boosting encourages dependence between the weak learners: each weak learner builds on the results of the previous one. This is how a sequence of weak learners is turned into a strong learner.

How does Boosting work?

Boosting shares its basic setup with bagging: we again train multiple weak learners (models). The difference is in how the training data for each learner is chosen. We don’t feed them uniformly random subsets of the training data as in bagging. Instead, we use a technique called weighting for data sampling.

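The idea of weighting is simple: every record is assigned a weight. During data sampling, we first choose the records that have higher weights than the others, and then randomly choose other records if more are required. (Many implementations instead draw each record with a probability proportional to its weight, which favors high-weight records in the same way.)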

We start by giving every record in the training data an equal weight, which makes each one equally likely to be picked during data sampling. This means that in the first step the sample is effectively a random draw from the whole training dataset. This sample is fed to a weak learner for training, and after training we test the learner on the entire training dataset. This reveals the records it was not able to predict correctly.

We take those records and increase their weights. Because of their higher weights, these records are picked first in the next round of data sampling for the next model, and the rest are picked randomly if needed (e.g., if you want 50 records and 5 of them have high weights, those 5 are picked first and the remaining 45 are picked randomly).
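The weight update can be sketched as follows. Two things here are assumptions rather than part of the description above: the weights of misclassified records are simply doubled and then renormalized (the `factor` parameter is hypothetical), and the next sample is drawn with probability proportional to weight rather than by strictly picking high-weight records first.

```python
import random

def increase_weights(weights, misclassified, factor=2.0):
    """Multiply the weights of misclassified records by factor,
    then rescale all weights so they sum to 1 again."""
    updated = [w * factor if i in misclassified else w
               for i, w in enumerate(weights)]
    total = sum(updated)
    return [w / total for w in updated]

n_records = 10
weights = [1 / n_records] * n_records   # step 1: every record has equal weight
misclassified = {2, 7}                  # hypothetical indices the first model got wrong
weights = increase_weights(weights, misclassified)

rng = random.Random(0)
# Draw record indices with replacement; records 2 and 7 are now twice as
# likely to be drawn as any other record
sample_indices = rng.choices(range(n_records), weights=weights, k=n_records)

print([round(w, 3) for w in weights])
print(sample_indices)
```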

After training, this model is tested in the same way, the weights are updated again, and the cycle repeats for as many models as the practitioner has chosen to train.

Just as in bagging, once all the weak learners are trained, they are combined to form a strong learner. At prediction time, it uses averaging (for regression) or voting (for classification) to derive the final output from the outputs of all the weak learners. In algorithms such as AdaBoost, each learner's vote is additionally weighted by how accurate that learner was.
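The full cycle (sample by weight, train, test on the whole training set, raise the weights of the mistakes, repeat) can be sketched like this. It is a simplified illustration, not AdaBoost: the weak learner is a hand-written threshold rule, misclassified weights are doubled (an assumed rule), sampling is weight-proportional, and the final vote is unweighted. All function names and the toy data are hypothetical.

```python
import random
from collections import Counter

def train_stump(data):
    """Fit a 1-D threshold rule with the fewest errors on data,
    a list of (x, label) pairs with label 0 or 1."""
    best = None
    for threshold in sorted({x for x, _ in data}):
        for polarity in (0, 1):  # label predicted when x >= threshold
            errors = sum((polarity if x >= threshold else 1 - polarity) != y
                         for x, y in data)
            if best is None or errors < best[0]:
                best = (errors, threshold, polarity)
    _, threshold, polarity = best
    return lambda x: polarity if x >= threshold else 1 - polarity

def train_boosting(data, n_models, sample_size, factor=2.0, seed=0):
    """Train weak learners one after another, re-weighting records each round."""
    rng = random.Random(seed)
    weights = [1 / len(data)] * len(data)   # start with equal weights
    models = []
    for _ in range(n_models):
        # Sample with replacement, favoring records with higher weights
        sample = rng.choices(data, weights=weights, k=sample_size)
        model = train_stump(sample)
        models.append(model)
        # Test on the entire training set and up-weight the mistakes
        weights = [w * factor if model(x) != y else w
                   for (x, y), w in zip(data, weights)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return models, weights

def predict_boosting(models, x):
    """Classify x by an unweighted majority vote over all weak learners."""
    return Counter(model(x) for model in models).most_common(1)[0][0]

# Toy data that no single threshold separates: a block of 1s in the middle
data = [(1, 0), (2, 0), (3, 1), (4, 1), (5, 1), (6, 0), (7, 0), (8, 0)]
models, weights = train_boosting(data, n_models=15, sample_size=len(data))

print([predict_boosting(models, x) for x, _ in data])
print([round(w, 3) for w in weights])
```

Unlike the bagging loop, each round here needs the weights produced by the previous round, so the models cannot be trained in parallel.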

Difference between bagging and boosting

| Bagging | Boosting |
|---|---|
| Bagging is a parallel ensemble learning method | Boosting is a sequential ensemble learning method |
| Its weak learners are independent of each other | Its weak learners depend on each other |
| It decreases the variance of a model | It decreases the bias of a model |
| It is used to reduce overfitting in a model | It is used to improve an underfitting model |
| Data is sampled randomly with replacement | Data is sampled with replacement, based on the weight assigned to each record |

Data Scientist with 3+ years of experience in building data-intensive applications in diverse industries. Proficient in predictive modeling, computer vision, natural language processing, data visualization etc. Aside from being a data scientist, I am also a blogger and photographer.

- Aman Kumar
