Introduction

Image segmentation is the process of dividing an image into regions, each represented by a pixel-wise mask. It involves grouping and splitting pixels according to properties they share. Dividing a picture into a collection of image objects with similar features is often an early step in image analysis, because it reduces the complexity of the image and thus makes the analysis easier.

Image segmentation algorithms split and group specific sets of pixels in an image. Applications of image segmentation include medical imaging, face detection, and autonomous self-driving cars. Let’s start the discussion on what image segmentation is, why it is needed, its types and approaches, and how to implement it in Python using OpenCV, scikit-image, scikit-learn, and Keras.

Overview

- What is image segmentation?
- Why is image segmentation needed?
- Types of Image segmentation
- Different Image Segmentation Techniques
- Implementation of image segmentation using Python
- Which image segmentation method is better?
- Conclusion

What is image segmentation?

Image segmentation is the process of dividing an image into different sections, called image objects, by assigning a label to every pixel. The division is based on image properties such as similarity (pixels inside a region share intensity, color, or texture) and discontinuity (abrupt changes mark the boundary between regions). Segmentation helps determine the region of interest (ROI) in an image, and its goal is to simplify the image so that it can be analyzed more easily.

Image segmentation can be seen as an extension of image classification in which we perform localization in addition to classification: rather than assigning one label to the whole image, the model pinpoints the location of each object by outlining the object’s boundary.

Why is image segmentation needed?

Image segmentation is needed because many applications depend on the precise, pixel-level output it delivers:

In medical imaging, highly skilled physicians can spend hours marking the salient regions of medical images for analysis. With the right image segmentation approach, this procedure can be completed in seconds.

Image segmentation is also required in scenarios such as object classification, pattern recognition, optical character recognition (OCR), number plate recognition, and content-based image retrieval (CBIR).

Types of Image segmentation

Image segmentation is divided into two groups:

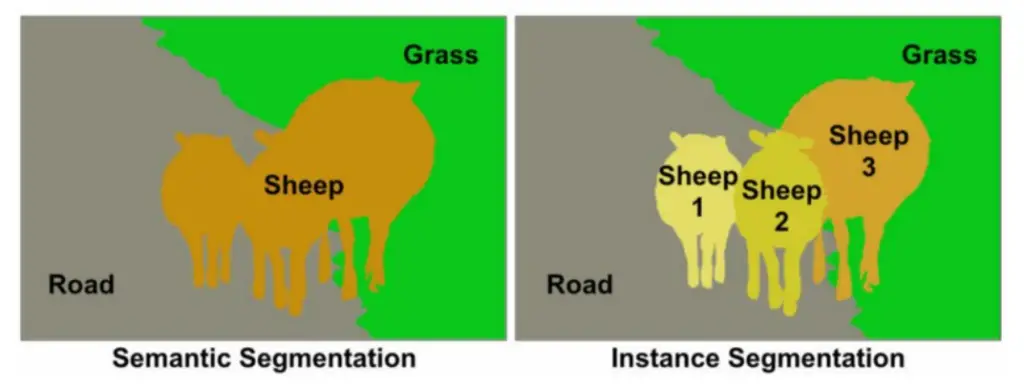

Semantic Segmentation

Semantic segmentation classifies every pixel in an image into a semantic class. Pixels belonging to a specific class are simply labeled with that class, with no other information or context taken into account. As a result, when an image contains multiple instances of the same class, semantic segmentation cannot tell them apart: all of them receive the same label. In other words, semantic segmentation is the task of clustering together the parts of an image that belong to the same object class.

Semantic segmentation thus amounts to recognizing and understanding an image at the pixel level.

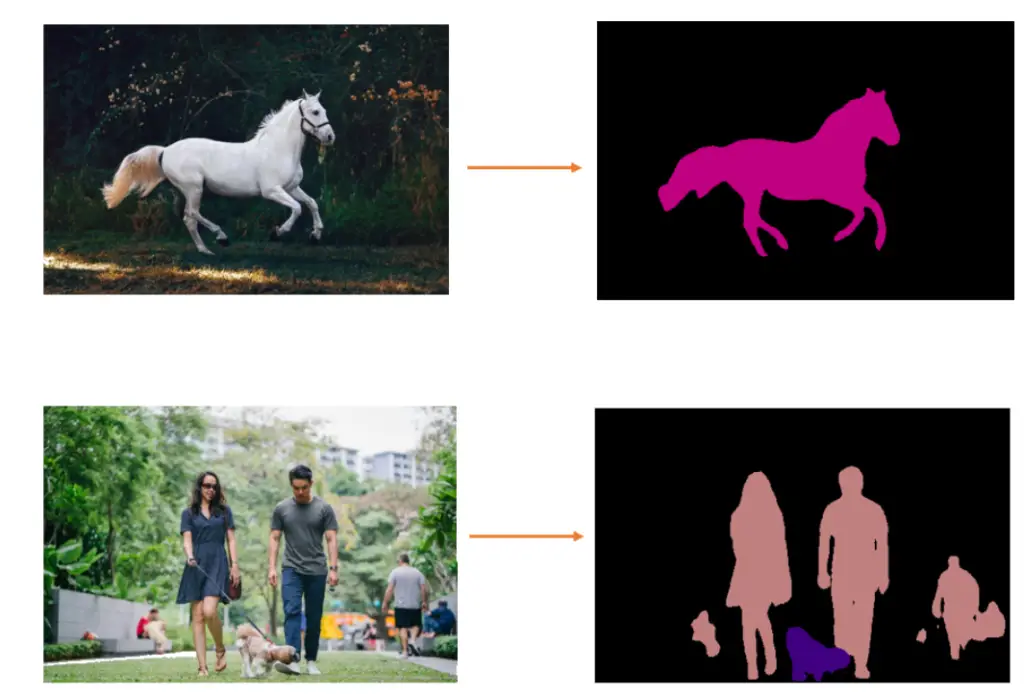

In the example image, the horse is segmented from the background. In semantic segmentation, the human class and the dog class are segmented, but there is no differentiation between individuals within a class: men and women, for instance, are all generalized into the single human class.

Instance Segmentation

Instance segmentation models categorize pixels by individual “instances” rather than only by class. An instance segmentation algorithm separates overlapping or very similar object regions based on their boundaries, so two objects of the same class receive different labels. If we feed an image of a crowd to an instance segmentation model, the model should (ideally) be able to separate each person from the rest of the crowd as well as from the surrounding objects. A purely class-agnostic instance segmentation model stops there and does not predict what each region is; many widely used models, such as Mask R-CNN, additionally assign a class label to every instance.

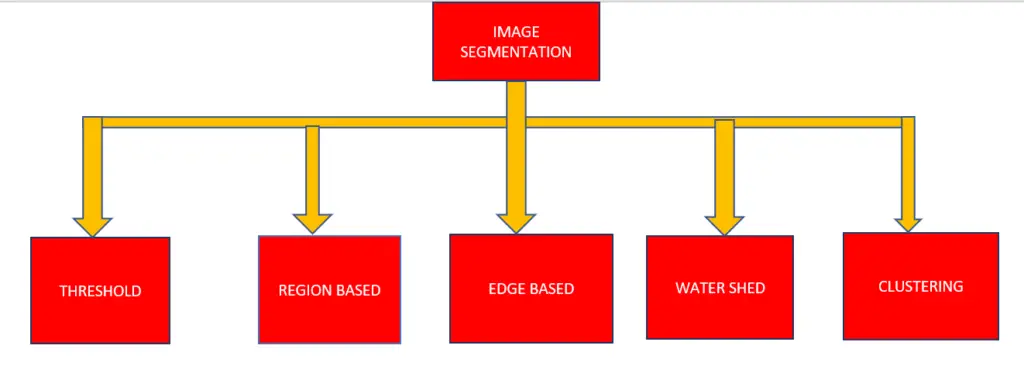
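To make the difference concrete, here is a small sketch with a hypothetical 6x8 label map (the array and its class values are made up for illustration). Semantic segmentation gives both “person” blobs the same label 1; labeling the connected components with `scipy.ndimage.label` then gives each blob its own instance id. This only works because the two blobs do not touch, so treat it as a crude stand-in rather than how learned instance segmentation models separate overlapping objects.

```python
import numpy as np
from scipy import ndimage

# Hypothetical semantic label map: 0 = background, 1 = "person".
# Both people carry the same label, so semantic segmentation cannot tell them apart.
semantic = np.array([
    [0, 0, 0, 0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0, 1, 1, 0],
    [0, 1, 1, 0, 0, 1, 1, 0],
    [0, 1, 1, 0, 0, 1, 1, 0],
    [0, 0, 0, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0, 0],
])

# Crude instance split (assumption: instances never touch):
# label each connected component of the "person" class separately.
instances, num_instances = ndimage.label(semantic == 1)

print(num_instances)  # 2
print(instances)      # left person -> 1, right person -> 2, background -> 0
```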

Different Image Segmentation Techniques

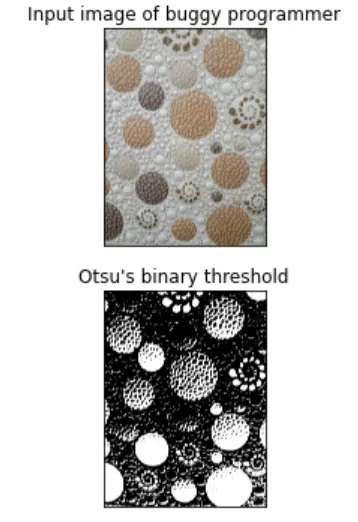

Thresholding-based method

Thresholding is a simple method of image segmentation in which a threshold is set to divide pixels into two classes. Pixels with values greater than or equal to the threshold value are set to 1, while pixels with values less than the threshold value are set to 0.

As a result, the image is converted into a binary map, a process known as binarization. Image thresholding is very useful when the difference in pixel values between the two target classes is very large, so that an intermediate value, such as their average, can easily be chosen as the threshold.

```python
import numpy as np
import cv2
from matplotlib import pyplot as plt

# OpenCV reads images in BGR channel order (replace the path with your own image)
img = cv2.imread(r'C:/Users/omkar/BUGGY_PROGRAMMER/img1.jpg')
b, g, r = cv2.split(img)
rgb_img = cv2.merge([r, g, b])  # RGB copy for display with Matplotlib

# Otsu's method chooses the threshold automatically (the 0 passed here is ignored);
# THRESH_BINARY_INV turns dark objects on a light background white (255)
gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
ret, thresh = cv2.threshold(gray, 0, 255, cv2.THRESH_BINARY_INV + cv2.THRESH_OTSU)

# Noise removal: closing fills small holes inside the foreground objects
kernel = np.ones((2, 2), np.uint8)
closing = cv2.morphologyEx(thresh, cv2.MORPH_CLOSE, kernel, iterations=2)

# Sure background area: dilate the objects outward
sure_bg = cv2.dilate(closing, kernel, iterations=3)

# Sure foreground area: pixels far enough from the background
dist_transform = cv2.distanceTransform(closing, cv2.DIST_L2, 3)
ret, sure_fg = cv2.threshold(dist_transform, 0.1 * dist_transform.max(), 255, 0)
sure_fg = np.uint8(sure_fg)

# Unknown region: neither sure background nor sure foreground
unknown = cv2.subtract(sure_bg, sure_fg)

# Label the sure-foreground components, then add 1 so that the
# sure background is labeled 1 instead of 0
ret, markers = cv2.connectedComponents(sure_fg)
markers = markers + 1
# Mark the unknown region with 0 so that the watershed decides it
markers[unknown == 255] = 0

# Watershed marks region boundaries with -1; paint them blue (BGR order)
markers = cv2.watershed(img, markers)
img[markers == -1] = [255, 0, 0]

plt.figure(figsize=(12, 5))
plt.subplot(211), plt.imshow(rgb_img)
plt.title('Input image of buggy programmer'), plt.xticks([]), plt.yticks([])
plt.subplot(212), plt.imshow(thresh, 'gray')
plt.title("Otsu's binary threshold"), plt.xticks([]), plt.yticks([])
plt.imsave('thresh.png', thresh, cmap='gray')
plt.tight_layout()
plt.show()
```

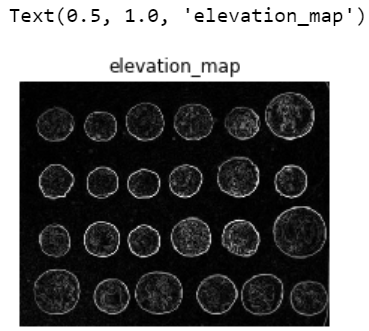
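The code above lets OpenCV pick the threshold through the `cv2.THRESH_OTSU` flag. As a rough sketch of what Otsu’s method computes, the NumPy function below tries every candidate threshold t and keeps the one that maximizes the between-class variance w0 · w1 · (μ0 − μ1)² of the two pixel classes. The function name `otsu_threshold` and the synthetic bimodal image are illustrative assumptions, not part of OpenCV. Following the convention described earlier, pixels ≥ t are set to 1 and the rest to 0 (OpenCV’s `THRESH_BINARY` uses the strict comparison > t, so results may differ by one gray level).

```python
import numpy as np

def otsu_threshold(gray):
    """Return the threshold t (1..255) that maximizes the between-class
    variance of an 8-bit grayscale image (Otsu's method)."""
    hist = np.bincount(gray.ravel(), minlength=256).astype(np.float64)
    prob = hist / hist.sum()          # normalized histogram
    levels = np.arange(256)
    best_t, best_var = 0, -1.0
    for t in range(1, 256):
        w0, w1 = prob[:t].sum(), prob[t:].sum()   # class weights
        if w0 == 0 or w1 == 0:
            continue                              # one class is empty
        mu0 = (levels[:t] * prob[:t]).sum() / w0  # mean of pixels < t
        mu1 = (levels[t:] * prob[t:]).sum() / w1  # mean of pixels >= t
        var_between = w0 * w1 * (mu0 - mu1) ** 2
        if var_between > best_var:
            best_t, best_var = t, var_between
    return best_t

# Synthetic bimodal image (assumption): dark pixels around 50, bright around 200
rng = np.random.default_rng(0)
values = np.concatenate([rng.normal(50, 10, 500), rng.normal(200, 10, 500)])
gray = np.clip(values, 0, 255).astype(np.uint8).reshape(20, 50)

t = otsu_threshold(gray)
binary = (gray >= t).astype(np.uint8)  # 1 for >= t, 0 for < t
```

Because the loop keeps the first maximum, any threshold in the empty gap between the two modes would separate them equally well; the sketch returns the lowest such value.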

Region-based method

Region-based segmentation algorithms search for similarities between adjacent pixels and group similar pixels into a single region.

Typically, the segmentation procedure begins with some pixels designated as seed pixels; the algorithm examines each seed pixel’s immediate neighbors and classifies them as similar or dissimilar to the seed.

The similar neighbors are then used as new seeds, and the process is repeated until the entire image has been segmented. The popular watershed algorithm is an example of a similar approach: it starts from seeds at the local maxima of the Euclidean distance map and grows regions from them under the constraint that no two seeds can be classified as belonging to the same region.

```python
import numpy as np
import matplotlib.pyplot as plt
from skimage import data
from skimage.filters import sobel

coins = data.coins()

# The Sobel gradient magnitude serves as an "elevation map":
# high values at region boundaries, low values inside regions
elevation_map = sobel(coins)

fig, ax = plt.subplots(figsize=(4, 3))
ax.imshow(elevation_map, cmap=plt.cm.gray, interpolation='nearest')
ax.axis('off')
ax.set_title('elevation_map')
plt.show()
```

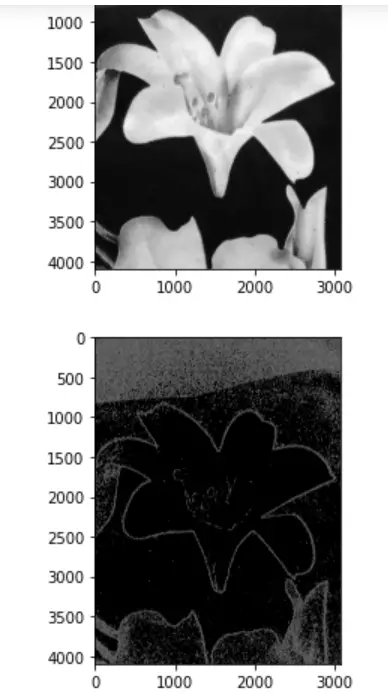
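The seed-and-grow procedure described at the start of this section can also be written directly. The sketch below is a minimal breadth-first region grower; the function name `region_grow`, the 4-connectivity, and the similarity rule (absolute difference from the seed intensity within a tolerance `tol`) are assumptions chosen for simplicity, and practical variants often compare against the running mean of the region instead.

```python
from collections import deque

import numpy as np

def region_grow(image, seed, tol):
    """Grow a region from `seed` (row, col), adding 4-connected neighbors
    whose intensity differs from the seed intensity by at most `tol`."""
    h, w = image.shape
    mask = np.zeros((h, w), dtype=bool)
    seed_value = float(image[seed])
    mask[seed] = True
    queue = deque([seed])
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
            nr, nc = r + dr, c + dc
            # Accepted neighbors become new seeds for the next step
            if 0 <= nr < h and 0 <= nc < w and not mask[nr, nc]:
                if abs(float(image[nr, nc]) - seed_value) <= tol:
                    mask[nr, nc] = True
                    queue.append((nr, nc))
    return mask

# Synthetic 8x8 image (assumption): a bright 3x3 square on a dark background
image = np.full((8, 8), 20, dtype=np.uint8)
image[2:5, 2:5] = 200

region = region_grow(image, seed=(3, 3), tol=30)
print(region.sum())  # 9
```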

Edge-based method

Edge-based segmentation relies on edge detection, the task of finding edges in images. From a segmentation standpoint, edge detection corresponds to classifying each pixel in an image as an edge pixel or a non-edge pixel. Edge detection is usually done by convolving the image with special filters that estimate the image gradient along the x and y axes of the image plane; pixels where the gradient magnitude is large are likely to lie on an edge.

Edge detection significantly reduces the amount of data to be processed and filters out irrelevant information, preserving only the important structural properties of an image. Edge-based segmentation algorithms detect edges based on differences in gray level, color, texture, brightness, saturation, contrast, and so on. To improve the results, additional processing steps are needed to link the detected edges into edge chains that correspond better to the borders of objects in the image. One of the most popular edge detection algorithms is the Canny edge detector.

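```python
import cv2
import numpy as np
import matplotlib.pyplot as plt

# Replace the path with your own image
image = cv2.imread("C:/Users/omkar/BUGGY_PROGRAMMER/flower.jpg")
gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
plt.imshow(gray, cmap="gray")
plt.show()

# Canny uses hysteresis thresholding: gradients above threshold2 start strong
# edges; gradients between threshold1 and threshold2 are kept only if they
# are connected to strong edges
edges = cv2.Canny(gray, threshold1=30, threshold2=100)
plt.imshow(edges, cmap="gray")
plt.show()
```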

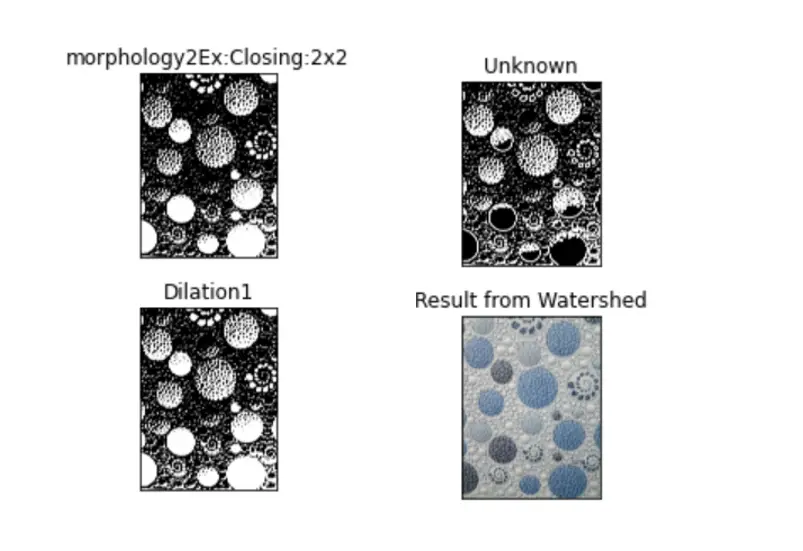
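Canny, like many edge detectors, starts from gradient-estimating filters. As a minimal sketch of that first step, the code below applies the 3x3 Sobel kernels (a common choice of gradient filter, used here as an example) to a synthetic image with one vertical edge and thresholds the gradient magnitude. The synthetic image and the threshold of half the maximum magnitude are illustrative assumptions; the full Canny algorithm additionally uses non-maximum suppression and hysteresis thresholding.

```python
import numpy as np
from scipy import ndimage

# 3x3 Sobel kernels estimating the derivative along x (columns) and y (rows)
kx = np.array([[-1, 0, 1],
               [-2, 0, 2],
               [-1, 0, 1]], dtype=float)
ky = kx.T

# Synthetic image (assumption): dark left half, bright right half
image = np.zeros((6, 6))
image[:, 3:] = 100.0

# convolve flips the kernel, which only changes the sign of gx and gy,
# not the gradient magnitude
gx = ndimage.convolve(image, kx, mode='nearest')
gy = ndimage.convolve(image, ky, mode='nearest')
magnitude = np.hypot(gx, gy)

# Classify pixels with a large gradient magnitude as edge pixels
edges = magnitude > 0.5 * magnitude.max()
```

Here only the two columns on either side of the brightness jump are marked as edges, which is exactly the "classify each pixel as edge or non-edge" view described above.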

Watershed-based method

In image processing, the watershed is a transformation defined on a grayscale image. It treats the image as a topographic map, with the brightness of each point representing its height, and finds the lines that run along the tops of ridges.

The method is thus based on a topographic interpretation of image boundaries. Before the watershed transform is applied, the image is usually binarized and cleaned up. Morphological closing fills small holes within foreground objects and small black points on the objects, and morphological dilation grows the objects so that everything outside them can be treated as sure background. The distance transform then computes, for each foreground pixel, its Euclidean distance to the nearest background pixel, producing a distance matrix; thresholding this matrix gives the sure foreground, from which the markers for the watershed transform are built.

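```python
import numpy as np
import cv2
from matplotlib import pyplot as plt

# Replace the path with your own image
img = cv2.imread(r'C:/Users/omkar/BUGGY_PROGRAMMER/flower.jpg')

# Binarize the image with Otsu's method
gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
ret, thresh = cv2.threshold(gray, 0, 255, cv2.THRESH_BINARY_INV + cv2.THRESH_OTSU)

# Closing fills small holes and black points inside the objects
kernel = np.ones((2, 2), np.uint8)
closing = cv2.morphologyEx(thresh, cv2.MORPH_CLOSE, kernel, iterations=2)

# Dilation grows the objects; everything outside them is sure background
sure_bg = cv2.dilate(closing, kernel, iterations=3)

# Euclidean distance transform, thresholded to get the sure foreground
dist_transform = cv2.distanceTransform(closing, cv2.DIST_L2, 3)
ret, sure_fg = cv2.threshold(dist_transform, 0.1 * dist_transform.max(), 255, 0)
sure_fg = np.uint8(sure_fg)
unknown = cv2.subtract(sure_bg, sure_fg)

# Markers: background = 1, objects = 2, 3, ..., unknown region = 0
ret, markers = cv2.connectedComponents(sure_fg)
markers = markers + 1
markers[unknown == 255] = 0

# Run the watershed and paint the boundaries (marked -1) blue in BGR order
markers = cv2.watershed(img, markers)
img[markers == -1] = [255, 0, 0]

plt.subplot(211), plt.imshow(closing, 'gray')
plt.title("morphologyEx: Closing: 2x2"), plt.xticks([]), plt.yticks([])
plt.subplot(212), plt.imshow(sure_bg, 'gray')
plt.imsave('dilation1.png', sure_bg, cmap='gray')
plt.title("Dilation1"), plt.xticks([]), plt.yticks([])
plt.tight_layout()
plt.show()

plt.subplot(211), plt.imshow(unknown, 'gray')
plt.title("Unknown"), plt.xticks([]), plt.yticks([])
plt.subplot(212), plt.imshow(cv2.cvtColor(img, cv2.COLOR_BGR2RGB))
plt.title("Result from Watershed"), plt.xticks([]), plt.yticks([])
plt.tight_layout()
plt.show()
```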

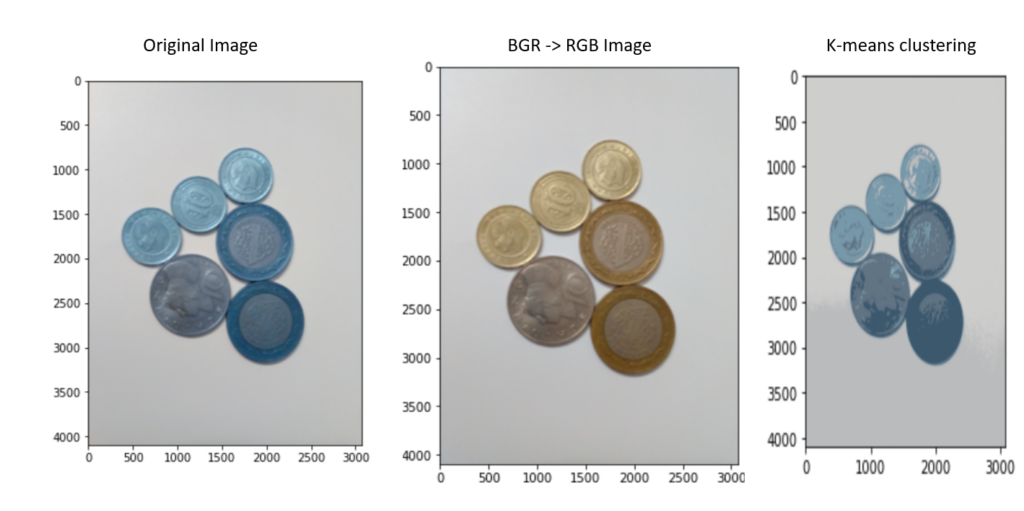
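The OpenCV pipeline derives watershed markers by thresholding the distance transform. scikit-image offers a similar route in which the markers are placed at the local maxima of the Euclidean distance map, as mentioned in the region-based section. The sketch below separates two overlapping discs that form a single binary blob, using `scipy.ndimage.distance_transform_edt`, `skimage.feature.peak_local_max`, and `skimage.segmentation.watershed`; the synthetic discs and their sizes are illustrative assumptions.

```python
import numpy as np
from scipy import ndimage as ndi
from skimage.feature import peak_local_max
from skimage.segmentation import watershed

# Two overlapping discs (assumption) form one connected binary blob
x, y = np.indices((80, 80))
x1, y1, x2, y2 = 28, 28, 44, 52
r1, r2 = 16, 20
disc1 = (x - x1) ** 2 + (y - y1) ** 2 < r1 ** 2
disc2 = (x - x2) ** 2 + (y - y2) ** 2 < r2 ** 2
image = np.logical_or(disc1, disc2)

# Euclidean distance from each foreground pixel to the nearest background pixel
distance = ndi.distance_transform_edt(image)

# Markers: local maxima of the distance map (roughly the disc centers)
coords = peak_local_max(distance, footprint=np.ones((3, 3)), labels=image.astype(int))
mask = np.zeros(distance.shape, dtype=bool)
mask[tuple(coords.T)] = True
markers, _ = ndi.label(mask)

# Flood the inverted distance map from the markers, restricted to the blob
labels = watershed(-distance, markers, mask=image)
```

Inverting the distance map turns the disc centers into basins, so the flooding from the two markers meets along the narrow "neck" where the discs overlap.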

Clustering-Based Method

Clustering algorithms, in contrast to classification algorithms, are unsupervised: the user does not have a predefined set of classes or groups (labels). Clustering algorithms help extract underlying, hidden information from data, such as structures, clusters, and groupings, which are usually not known in advance. Clustering techniques divide the image into clusters, or disjoint groups of pixels with similar properties.

Among clustering-based approaches, efficient algorithms such as k-means, improved k-means, fuzzy c-means (FCM), and improved fuzzy c-means (IFCM) are widely used.

Because of its simplicity and computational efficiency, clustering is a preferred and widely used method. Improved k-means variants can reduce the number of iterations that k-means needs. FCM allows data points (here, pixels) to belong to multiple clusters with varying degrees of membership, and improved FCM addresses the slower processing time of standard FCM.

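```python
from sklearn.cluster import KMeans
import numpy as np
from matplotlib import pyplot as plt
import cv2

# Read the image and convert from OpenCV's BGR order to RGB for display
img = cv2.imread("C:/Users/omkar/BUGGY_PROGRAMMER/cluster1.jpg")
img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)

# Each pixel becomes one 3-dimensional sample (R, G, B)
vectorized = img.reshape((-1, 3))

# Group the pixel colors into 5 clusters
kmeans = KMeans(n_clusters=5, random_state=0, n_init=5).fit(vectorized)

# Replace every pixel by the center (mean color) of its cluster
centers = np.uint8(kmeans.cluster_centers_)
segmented_data = centers[kmeans.labels_.flatten()]
segmented_image = segmented_data.reshape(img.shape)

plt.imshow(segmented_image)
plt.axis('off')
plt.show()
```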
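The k-means code above assigns each pixel to exactly one cluster. To illustrate how FCM instead gives each pixel a degree of membership in every cluster, here is a minimal NumPy sketch of fuzzy c-means on 1-D pixel intensities. The function `fuzzy_c_means`, the fuzzifier m = 2, the fixed iteration count, and the sample intensities are assumptions for illustration; this is the standard FCM update (membership-weighted centers, then inverse-distance memberships), not the improved IFCM variant mentioned above.

```python
import numpy as np

def fuzzy_c_means(x, c=2, m=2.0, n_iter=100, seed=0):
    """Minimal fuzzy c-means on 1-D data x. Returns (centers, u), where
    u[i, j] is the membership of sample j in cluster i."""
    rng = np.random.default_rng(seed)
    u = rng.random((c, x.size))
    u /= u.sum(axis=0)                         # each column sums to 1
    for _ in range(n_iter):
        um = u ** m
        centers = (um @ x) / um.sum(axis=1)    # membership-weighted means
        d = np.abs(x[None, :] - centers[:, None]) + 1e-12  # distances, shape (c, n)
        # u[i, j] = 1 / sum_k (d[i, j] / d[k, j]) ** (2 / (m - 1))
        ratio = d[:, None, :] / d[None, :, :]
        u = 1.0 / (ratio ** (2.0 / (m - 1.0))).sum(axis=1)
    return centers, u

# Hypothetical pixel intensities from a dark and a bright region
pixels = np.array([10, 12, 11, 13, 200, 198, 202, 199], dtype=float)
centers, u = fuzzy_c_means(pixels, c=2)
print(np.round(centers, 2))
print(np.round(u, 3))
```

Each column of `u` sums to 1; a pixel close to one center gets a membership near 1 for that cluster and near 0 for the other, while a pixel halfway between the centers would be shared roughly equally.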

Implementation of image segmentation using Python

The following is a neural network implementation using the segmentation_models library and Keras.

```python
import keras
import segmentation_models as sm

keras.backend.set_image_data_format('channels_last')

# A segmentation model created by the library is just an instance of a
# Keras Model, which can be built as easily as:
model = sm.Unet()
model = sm.Unet('resnet34', encoder_weights='imagenet')

# Change the number of output classes in the model (choose your case):
model = sm.Unet('resnet34', classes=1, activation='sigmoid')  # binary
model = sm.Unet('resnet34', classes=3, activation='softmax')  # multi-class, non-overlapping
model = sm.Unet('resnet34', classes=3, activation='sigmoid')  # multi-class, overlapping masks

# Input with 6 channels instead of RGB: pretrained ImageNet encoder
# weights cannot be used, so encoder_weights=None
model = sm.Unet('resnet34', input_shape=(None, None, 6), encoder_weights=None)
```

Preparing a model for the CamVid image dataset looks like this:

```python
import segmentation_models as sm

BACKBONE = 'resnet34'
preprocess_input = sm.get_preprocessing(BACKBONE)

# load_data stands for a function that loads the CamVid image dataset
x_train, y_train, x_val, y_val = load_data(...)

# Apply the backbone-specific input preprocessing
x_train = preprocess_input(x_train)
x_val = preprocess_input(x_val)

model = sm.Unet(BACKBONE, encoder_weights='imagenet')
model.compile(
    'Adam',
    loss=sm.losses.bce_jaccard_loss,
    metrics=[sm.metrics.iou_score],
)
```

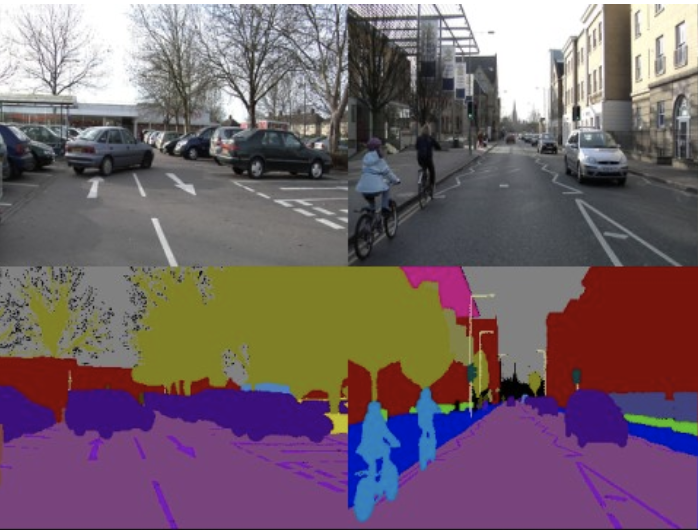

Above, we have seen image segmentation on the CamVid dataset.
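The model above is compiled with `sm.metrics.iou_score`, which measures intersection over union (IoU, also called the Jaccard index): |A ∩ B| / |A ∪ B| for a predicted mask A and a ground-truth mask B. As a simplified sketch of that quantity for binary masks (the library’s own metric works on predicted probabilities and adds smoothing, so its values can differ slightly), consider the following; the function name `iou` and the two small masks are illustrative assumptions.

```python
import numpy as np

def iou(pred, target):
    """Intersection over union (Jaccard index) of two binary masks."""
    pred, target = pred.astype(bool), target.astype(bool)
    union = np.logical_or(pred, target).sum()
    if union == 0:
        return 1.0  # both masks empty: treated as a perfect match (a convention)
    return np.logical_and(pred, target).sum() / union

pred = np.array([[1, 1, 0],
                 [1, 0, 0],
                 [0, 0, 0]])
target = np.array([[1, 1, 0],
                   [0, 0, 0],
                   [0, 0, 1]])
print(iou(pred, target))  # 0.5
```

Two pixels are shared and four pixels are covered by at least one mask, giving 2 / 4 = 0.5. The `bce_jaccard_loss` used above combines binary cross-entropy with a Jaccard term built on the same ratio.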

Which image segmentation method is better?

It depends on the situation; no single method can be judged the best among the existing ones. Automatic image segmentation that meets your needs is a difficult task for a computer because it does not know which segment is of interest to you. The right choice is determined by the image to be segmented and the meaningful information to be retrieved from it. There is no one-size-fits-all algorithm for image segmentation.

Commonly used image segmentation techniques are:

- Otsu’s thresholding method
- K-means clustering
- Region-growing algorithms
- Edge-, contour-, and corner-based approaches, such as Canny and the Hessian matrix
- Watershed-based algorithms

Conclusion

In this article, we discussed what image segmentation is, its types and approaches, why it is used and its benefits, and how to implement it in Python using OpenCV, scikit-learn, scikit-image, and Keras. Image segmentation and its different techniques are used in various fields such as medical imaging, self-driving cars, image processing, and computer vision. Image segmentation relies heavily on characterizing and visualizing the region of interest in an image. Feel free to ask any questions about this topic, let us know if any math or further details should be added, and share your experience and thoughts.

Thanks for reading this article!

Data Scientist with 3+ years of experience in building data-intensive applications in diverse industries. Proficient in predictive modeling, computer vision, natural language processing, data visualization etc. Aside from being a data scientist, I am also a blogger and photographer.

- Aman Kumar
