Decision Tree vs Random Forest is an often-debated topic in the machine learning community. Both are supervised machine learning algorithms that handle regression and classification tasks, but they differ in how they work and in how well they perform, and each has its own pros and cons. In this article, we compare Decision Tree and Random Forest and discuss which one to use in which situation.

Overview

- Decision Tree?

- Random Forest?

- Decision Tree vs Random Forest: Which one is better?

- Decision Tree vs Random Forest: When should you use each algorithm?

- Conclusion

Decision Tree

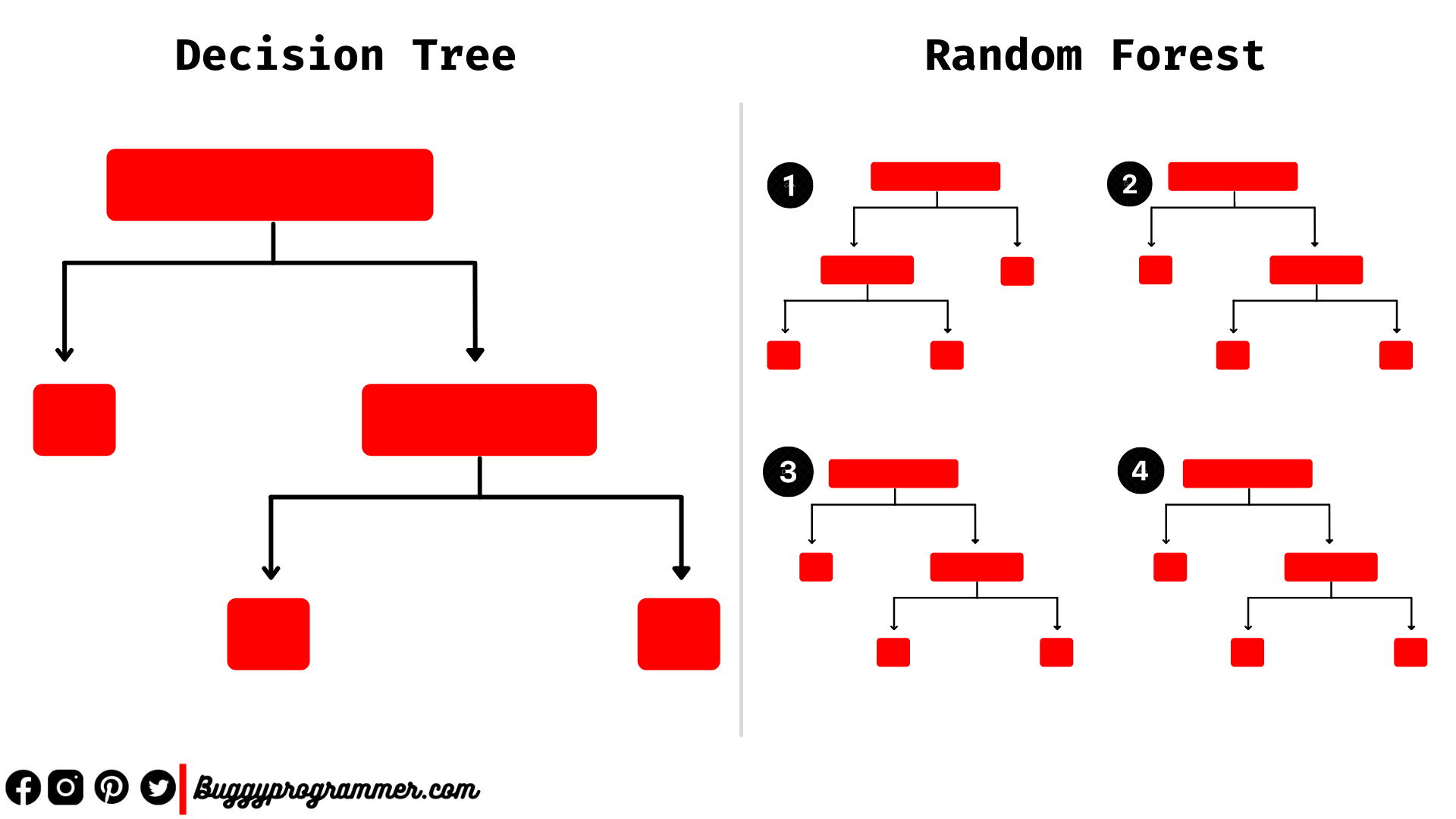

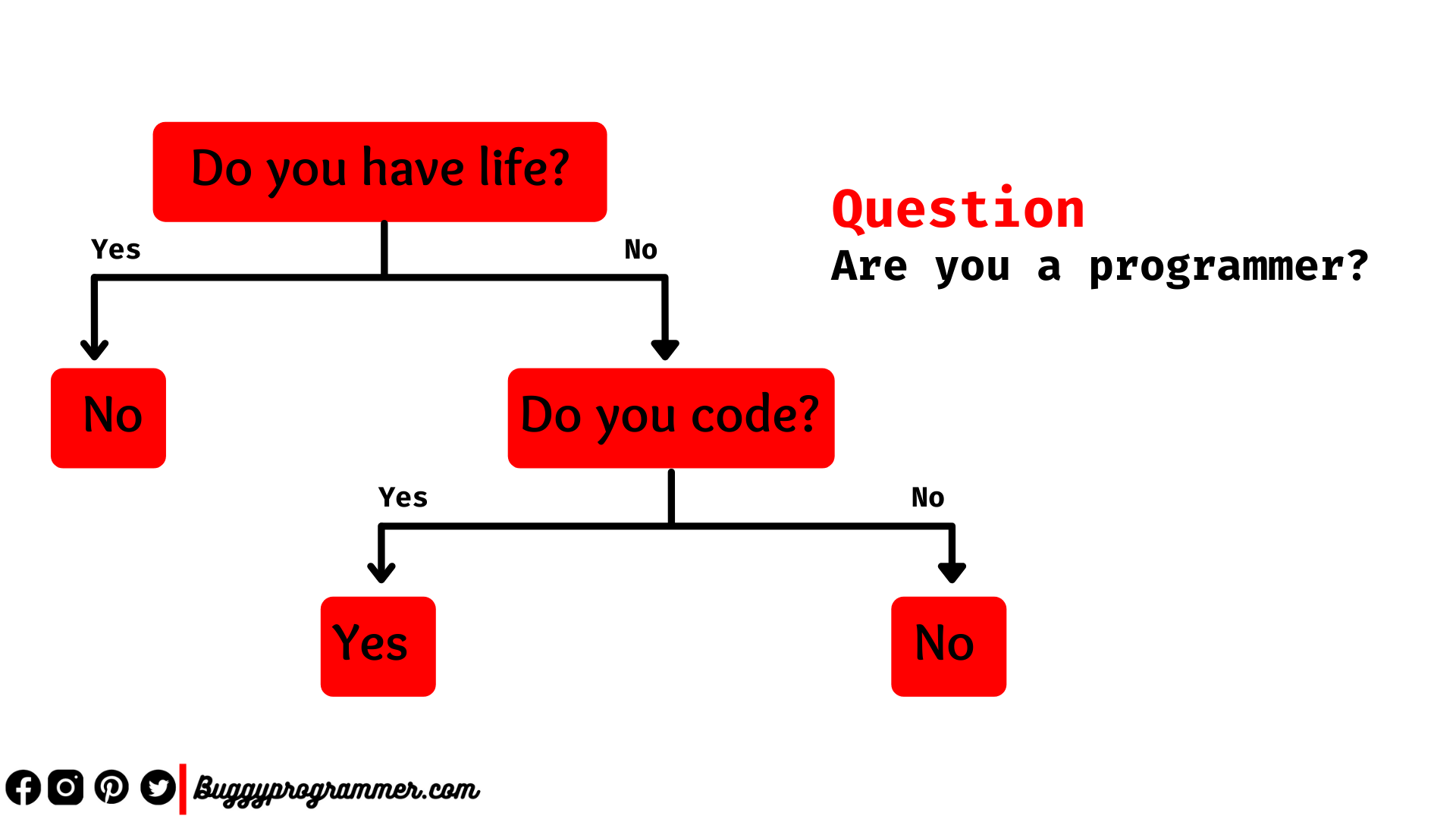

Decision Tree is a supervised machine learning algorithm that is used for both classification and regression tasks. It is a tree-based model and works by constructing a model in the form of a tree structure with decision nodes and leaf nodes. Each decision node consists of two or more branches and each leaf node represents a decision. The topmost decision node is called the root node. Decision trees can deal with both continuous and categorical data.
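To make the idea of a decision node more concrete, here is a minimal sketch of how one split could be chosen for a single numeric feature. It assumes Gini impurity as the split criterion, which is one common choice; the toy loan data and the helper names `gini` and `best_threshold` are hypothetical and only for illustration.

```python
from collections import Counter


def gini(labels):
    """Gini impurity of a list of class labels (0.0 means the node is pure)."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def best_threshold(values, labels):
    """Return (threshold, weighted_gini) for the best split 'value <= threshold'."""
    best = (None, float("inf"))
    n = len(values)
    # Skip the largest value: splitting there would leave the right side empty.
    for t in sorted(set(values))[:-1]:
        left = [y for x, y in zip(values, labels) if x <= t]
        right = [y for x, y in zip(values, labels) if x > t]
        score = (len(left) * gini(left) + len(right) * gini(right)) / n
        if score < best[1]:
            best = (t, score)
    return best


# Hypothetical toy data: loan amount (in thousands) and whether the loan defaulted.
amounts = [5, 10, 15, 40, 50, 60]
defaulted = ["no", "no", "no", "yes", "yes", "yes"]

threshold, impurity = best_threshold(amounts, defaulted)
print(threshold, impurity)  # 15 0.0
```

A full tree repeats this search over every feature and then recurses into each side of the split until the leaves are pure or a stopping rule is met.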

Advantages

- It needs little or no standardizing or normalizing of the data.

- It can handle both continuous and categorical data.

- It takes less computational time.

- It also provides clear visualizations at node level.

- It is very simple and easy to understand.

- It also provides feature importance scores.

Disadvantages

- It can consume a lot of memory when the tree grows deep.

- It is prone to overfitting, which leads to more errors on unseen data.

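- It can show both bias (when the tree is too shallow) and variance (when the tree is too deep).

- Reproducibility is low: a small change in the training data can produce a very different tree.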

Random Forest

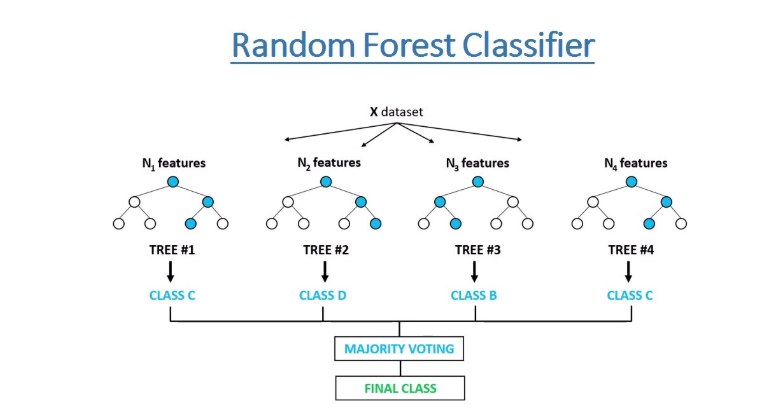

Random Forest is an ensemble of several decision trees, each trained on a randomly sampled subset of the data. A group of trees is called a forest, and because the trees are built on randomly selected data, the algorithm is called Random Forest. Each tree is grown independently on its own random sample, choosing its splits with a criterion such as information gain, and the forest combines the trees' outputs (a majority vote for classification, an average for regression) into the final prediction.
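The sampling-and-voting idea can be sketched in a few lines of plain Python. This is only an illustration under simplifying assumptions: each "tree" is a hypothetical one-split stump on a single feature, and the random feature selection that real Random Forest implementations also perform is left out.

```python
import random
from collections import Counter


def majority(labels):
    """Most frequent label in a non-empty list."""
    return Counter(labels).most_common(1)[0][0]


def bootstrap_sample(rows, rng):
    """Draw len(rows) rows with replacement (one bootstrap sample)."""
    return [rng.choice(rows) for _ in rows]


def train_stump(sample):
    """Hypothetical stand-in for a full tree: split at the mean feature value
    and predict the majority label on each side of the split."""
    threshold = sum(x for x, _ in sample) / len(sample)
    overall = majority([y for _, y in sample])
    left = [y for x, y in sample if x <= threshold]
    right = [y for x, y in sample if x > threshold]
    left_label = majority(left) if left else overall
    right_label = majority(right) if right else overall
    return lambda x: left_label if x <= threshold else right_label


def train_forest(rows, n_trees, seed=0):
    """Train n_trees stumps, each on its own bootstrap sample."""
    rng = random.Random(seed)
    return [train_stump(bootstrap_sample(rows, rng)) for _ in range(n_trees)]


def forest_predict(forest, x):
    """Classify x by a majority vote over all trees."""
    return majority([tree(x) for tree in forest])


# Hypothetical toy data: (loan amount in thousands, defaulted?).
rows = [(5, "no"), (10, "no"), (15, "no"), (40, "yes"), (50, "yes"), (60, "yes")]
forest = train_forest(rows, n_trees=25)
print(forest_predict(forest, 8), forest_predict(forest, 55))
```

An individual stump can be wrong when its bootstrap sample happens to be unrepresentative, but the vote over many stumps smooths those mistakes out, which is the intuition behind the ensemble.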

Advantages

- It works both on regression and classification tasks.

- It is less prone to overfitting than a single decision tree.

- It usually gives higher accuracy than a single decision tree.

- It is suitable for large data, as the dataset is split into random samples for training.

- Handles missing data easily.

- There is no need for normalization of data.

Disadvantages

- It takes more computational time as several decision trees are constructed.

- It is complex to interpret.

- This algorithm has limited capabilities when it comes to regression tasks: it cannot predict values outside the range of the targets seen in training.
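One reason often given for the regression limitation is that a tree predicts the mean of the training targets that fall into a leaf, so neither a single tree nor an average of trees can go beyond the largest target seen in training. The sketch below illustrates this with a hypothetical one-split regression stump; the split rule and the data are assumptions made for the example.

```python
from statistics import mean


def fit_stump(xs, ys):
    """One-split regression stump: predict the mean target on each side."""
    split = sorted(xs)[len(xs) // 2]  # hypothetical split rule: upper median of x
    left_mean = mean(y for x, y in zip(xs, ys) if x < split)
    right_mean = mean(y for x, y in zip(xs, ys) if x >= split)
    return lambda x: left_mean if x < split else right_mean


xs = [1, 2, 3, 4, 5, 6]
ys = [2 * x for x in xs]  # the true relationship is y = 2x, so ys runs from 2 to 12

predict = fit_stump(xs, ys)
print(predict(6), predict(100))  # 10 10
```

Even though the true value at x = 100 would be 200, the stump still answers 10, the mean of its right-hand leaf.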

Decision Tree vs Random Forest: Which one is better?

Now that we have briefly seen how both algorithms work, let us evaluate them and see which one is better. To do this, we will create two models, one with a decision tree and the other with a random forest, and then compare the results. We will create both models using the PyCaret library.

PyCaret is a low-code AutoML library that is used to build and experiment with machine learning models. It also offers a hub of sample datasets, and we will use one of them to compare Decision Tree vs Random Forest. First, we will install the PyCaret library using the pip command.

```shell
pip install pycaret
```

Now we will import the necessary modules.

```python
from pycaret.datasets import get_data
from pycaret.classification import *
```

Now that we have imported all the necessary things let’s import the credit dataset, which is about classifying loan defaulters, and create a model.

```python
data = get_data('credit')

exp1 = setup(data = data, target = 'default')
```

We imported the dataset and we created an experimental setup of our training environment. Let’s create a decision tree model.

```python
dt = create_model('dt')
```

The output of the above command will look like this:

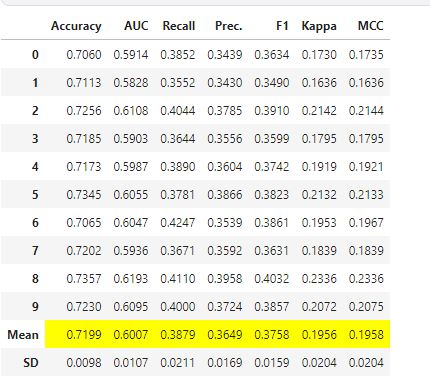

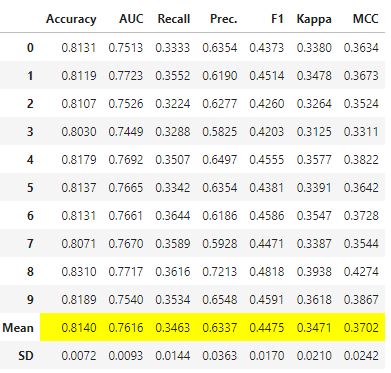

Our decision tree model produced an accuracy of 71.99%. Now let’s evaluate this decision tree model and get various plots which we will use to compare later.

```python
evaluate_model(dt)
```

It produces an HTML-rendered interactive display where we can see many plots.

Now let’s repeat the same process with Random Forest.

```python
rf = create_model('rf')
```

Here the Random Forest model produced an accuracy of 81.40%. Let’s evaluate it to get the graphs.

```python
evaluate_model(rf)
```

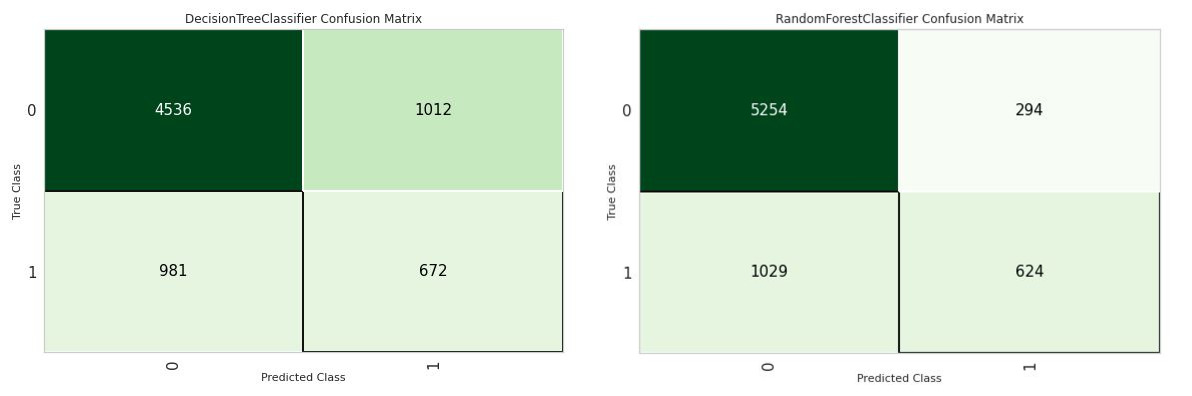

The above command produces an interactive widget display to see graphs. Let’s see a Decision Tree vs Random Forest graph analysis. First, let us compare the confusion matrix of each algorithm.

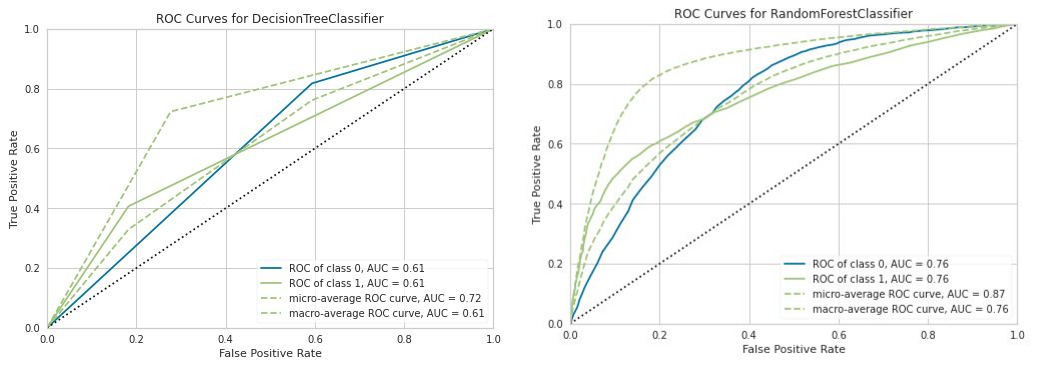
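For readers unfamiliar with the plot, a binary confusion matrix simply counts the four possible outcomes of a prediction. The sketch below shows how those counts and the accuracy are derived; the label lists are hypothetical and are not taken from the credit dataset.

```python
def confusion_matrix(actual, predicted, positive=1):
    """Return (tp, fp, fn, tn) for binary labels."""
    tp = fp = fn = tn = 0
    for a, p in zip(actual, predicted):
        if p == positive:
            if a == positive:
                tp += 1  # predicted default, really defaulted
            else:
                fp += 1  # predicted default, did not default
        else:
            if a == positive:
                fn += 1  # missed a real default
            else:
                tn += 1  # correctly predicted no default
    return tp, fp, fn, tn


def accuracy(tp, fp, fn, tn):
    """Share of all predictions that were correct."""
    return (tp + tn) / (tp + fp + fn + tn)


# Hypothetical labels: 1 = defaulted, 0 = did not default.
actual = [1, 0, 1, 1, 0, 0, 1, 0]
predicted = [1, 0, 0, 1, 0, 1, 1, 0]

tp, fp, fn, tn = confusion_matrix(actual, predicted)
print(tp, fp, fn, tn, accuracy(tp, fp, fn, tn))  # 3 1 1 3 0.75
```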

The confusion matrices show that Random Forest outperformed the Decision Tree at classifying the individual labels. Now, let us see the ROC curves.

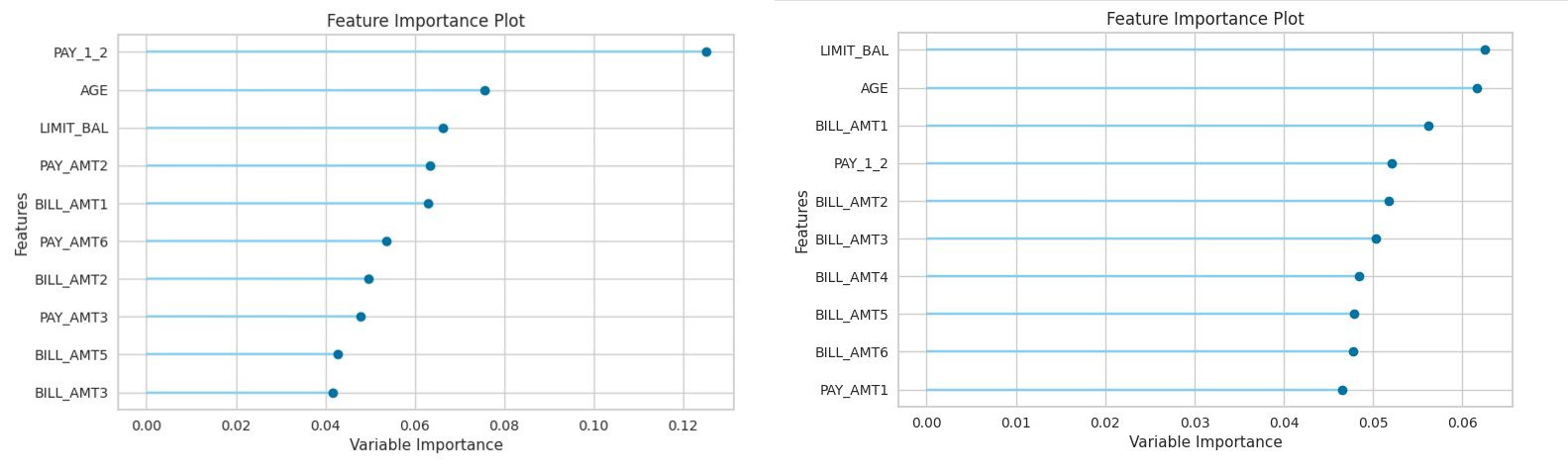
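The area under a ROC curve (AUC) can be read as the probability that a randomly chosen positive example gets a higher score than a randomly chosen negative one. The sketch below computes it that way by comparing every positive/negative pair; the scores are hypothetical and only illustrate why hard 0/1 outputs tend to rank examples less finely than averaged votes.

```python
def roc_auc(actual, scores):
    """AUC as the share of (positive, negative) pairs ranked correctly.
    Tied scores count as half a correct ranking."""
    pos = [s for a, s in zip(actual, scores) if a == 1]
    neg = [s for a, s in zip(actual, scores) if a == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


actual = [1, 0, 1, 1, 0, 0]
# Hypothetical scores: hard 0/1 outputs from one tree vs vote fractions from a forest.
tree_scores = [1.0, 0.0, 0.0, 1.0, 1.0, 0.0]
forest_scores = [0.9, 0.2, 0.6, 0.8, 0.7, 0.1]

print(round(roc_auc(actual, tree_scores), 3))    # 0.667
print(round(roc_auc(actual, forest_scores), 3))  # 0.889
```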

The area under the ROC curve is clearly higher for Random Forest than for the Decision Tree. Now let’s look at the feature importances produced by both algorithms.

In the feature importance plots, the left graph is for the Decision Tree and the right graph is for Random Forest. Random Forest has captured the importance of many features in the dataset better than the Decision Tree has. To sum up, Random Forest outperformed the Decision Tree on every metric compared here. A brief overview of the main differences between the two algorithms is shown below.

| | Random Forest | Decision Tree |
| --- | --- | --- |
| Interpretability | Hard to interpret | Easy to interpret |
| Accuracy | Highly accurate | Accuracy varies |
| Overfitting | Less likely to overfit data | Highly likely to overfit data |
| Outliers | Not affected by outliers | Affected by outliers |
| Computation | Computationally intensive | Computationally efficient |


Decision Tree vs Random Forest: When should you use each algorithm?

Although Random Forest generally performs better than a Decision Tree, interpretability is a major concern. If interpretability is not an issue for you, you can use the Random Forest algorithm. If the dataset is very small and you want good interpretability in less time, you can use the Decision Tree algorithm. In the end, it is wise to try both algorithms and check their performance on your dataset, as no two datasets are the same.

Conclusion

In the above discussion, we compared and evaluated Decision Tree and Random Forest and found that Random Forest was more effective than the Decision Tree on our dataset. Random Forest is an ensemble technique and one of the most widely used machine learning algorithms. For further reading on this algorithm, please go through this tutorial.

Data Scientist with 3+ years of experience in building data-intensive applications in diverse industries. Proficient in predictive modeling, computer vision, natural language processing, data visualization etc. Aside from being a data scientist, I am also a blogger and photographer.

- Aman Kumar
